cloud automation

What is cloud automation?

Cloud automation is a broad term that refers to processes and tools that reduce or eliminate manual efforts used to provision and manage cloud computing workloads and services. Organizations can apply cloud automation to private, public and hybrid cloud environments.

Why use cloud automation?

Traditional deployment and operation of enterprise workloads is a time-consuming and manual process. This often involves repetitive tasks, such as the following:

- sizing, provisioning and configuring resources such as virtual machines (VMs);

- establishing VM clusters and load balancing;

- creating storage logical unit numbers (LUNs);

- invoking virtual networks;

- making the actual deployment; and

- monitoring and managing availability and performance.

Although each of these manual processes works, together they are inefficient and error-prone. Errors require troubleshooting, which delays the workload's availability. They might also expose security vulnerabilities that put the enterprise at risk.

With cloud automation, an organization eliminates the repetitive, manual processes used to deploy and manage workloads. To achieve cloud automation, an IT team needs to use orchestration and automation tools that run on top of its virtualized environment.

What are types of cloud automation?

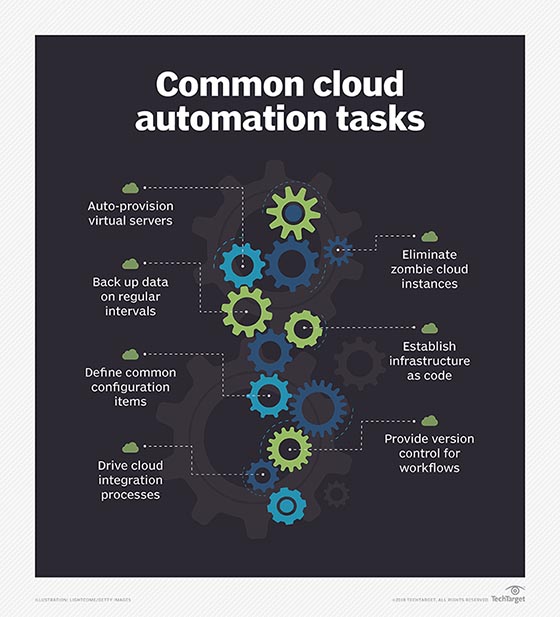

Automating various tasks in the cloud removes the repetition, inefficiency and errors inherent with manual processes and intervention. Typical examples include the following:

- Resource allocation. Autoscaling -- the ability to scale up and down the use of compute, memory or networking resources to match demand -- is a core tenet of cloud computing. It provides elasticity in resource usage and enables the pay-as-you-go cloud cost model.

- Configurations. Infrastructure configurations can be defined through templates and code and implemented automatically. In the cloud, opportunities for integration increase with associated cloud services.

- Development and deployment. Continuous software development relies upon automation for various steps, from code scans and version control to testing and deployment.

- Tagging. Assets can be tagged automatically based on specific criteria, context and conditions of operation.

- Security. Cloud environments can be set up with automated security controls that enable or restrict access to apps or data, and scan for vulnerabilities and unusual performance levels.

- Logging and monitoring. Cloud tools and functions can be set up to log all activity involving services and workloads in an environment. Monitoring filters can be set up to look for anomalies or unexpected events.
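As an illustration of the tagging item above, the following is a minimal sketch of rule-based automatic tagging. The `Asset` class, its fields and the three rules are hypothetical and exist only for this example; a real platform would expose its own asset model and tagging API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Asset:
    """A hypothetical cloud asset; the field names are illustrative only."""
    name: str
    kind: str          # e.g. "vm" or "bucket"
    environment: str   # e.g. "prod" or "dev"
    tags: dict[str, str] = field(default_factory=dict)


# Each rule pairs a condition with the tag key and value to apply when it holds.
TagRule = tuple[Callable[[Asset], bool], str, str]

RULES: list[TagRule] = [
    (lambda a: a.environment == "prod", "backup", "daily"),
    (lambda a: a.environment != "prod", "auto-shutdown", "true"),
    (lambda a: a.kind == "bucket", "data-class", "review-needed"),
]


def auto_tag(asset: Asset, rules: list[TagRule] = RULES) -> Asset:
    """Apply every matching rule; tags that already exist are not overwritten."""
    for condition, key, value in rules:
        if condition(asset):
            asset.tags.setdefault(key, value)
    return asset


if __name__ == "__main__":
    print(auto_tag(Asset("web-01", "vm", "prod")).tags)
```

Keeping the rules as data rather than hard-coded branches means new tagging criteria can be added without touching the function that applies them.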

What are the benefits of cloud automation?

When implemented properly, cloud automation offers many benefits, such as the following:

- it saves an IT team time and money;

- it is faster, more secure and more scalable than manually performing tasks;

- it leads to fewer errors, as organizations can construct more predictable and reliable workflows; and

- it contributes directly to better IT and corporate governance.

Cloud automation also enables IT teams, freed from repetitive and manual administrative tasks, to focus on higher-level work that more closely aligns with an organization's business needs, such as integrating higher-level cloud services or developing new product features.

What are the differences between cloud automation and cloud orchestration?

Cloud automation invokes various steps and processes to deploy and manage workloads in the cloud with minimal or no human intervention. Cloud orchestration describes how an administrator codifies and coordinates those automated tasks to occur at specific times and in specific sequences for specific purposes.

Cloud automation and orchestration are complementary and interdependent. No orchestration process is entirely manual, and automated tasks are by nature part of an orchestration process.

Consider regularly scheduled data backup and recovery using the cloud. IT staff uses a tool, either native to the cloud platform or from a third party, to plan a sequence of tasks based on logical events, such as time of day or discovery of error codes. This entire process from start to finish represents cloud orchestration. Individual parts of the backup process are automated, such as the actual data backup and the notification that the process was successful. If error codes are discovered, another orchestrated process kicks off to alert staff so they can take corrective action, repeat or manually complete the backup, and troubleshoot what went wrong.
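The backup scenario can be sketched in a few lines. This is an assumption-laden illustration, not any vendor's workflow engine: `run_backup_workflow`, its parameters and the use of `RuntimeError` to stand in for a discovered error code are all hypothetical. The `backup` and `notify` callables represent the individual automated tasks, while the function itself plays the role of the orchestration that sequences them.

```python
from typing import Callable


def run_backup_workflow(
    backup: Callable[[], None],
    notify: Callable[[str], None],
    max_attempts: int = 2,
) -> bool:
    """Orchestrate one backup run: try, retry on error, then escalate to staff."""
    for attempt in range(1, max_attempts + 1):
        try:
            backup()                      # automated task: the actual data backup
        except RuntimeError as err:       # stands in for a discovered error code
            notify(f"attempt {attempt} failed: {err}")
            continue
        notify(f"backup succeeded on attempt {attempt}")  # automated notification
        return True
    # Every attempt failed, so hand off to a person for corrective action.
    notify("backup failed; manual intervention required")
    return False


if __name__ == "__main__":
    def healthy_backup() -> None:
        pass  # a real task would copy data here

    run_backup_workflow(healthy_backup, print)
```

In practice the schedule (for example, time of day) would come from the orchestration tool; the sketch only shows the sequencing and error-handling logic.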

Cloud automation use cases

While cloud automation tools or frameworks all share the same general goal, use cases vary widely, depending on the particular business and its goals. Some basic examples of cloud automation include the following:

- auto-provision cloud infrastructure resources;

- shut down unused instances and processes (mitigating sprawl); and

- perform regular data backup.
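The second item in the list, shutting down unused instances, might look like the sketch below. The `Instance` class and the 24-hour idle threshold are assumptions made for the example; a real tool would read activity data from the provider and call the provider's stop API where the comment indicates.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Instance:
    """A hypothetical record of one running instance."""
    instance_id: str
    last_active: datetime
    running: bool = True


def stop_idle_instances(
    instances: list[Instance],
    now: datetime,
    max_idle: timedelta = timedelta(hours=24),
) -> list[str]:
    """Stop running instances idle for longer than max_idle; return their IDs."""
    stopped = []
    for inst in instances:
        if inst.running and now - inst.last_active > max_idle:
            inst.running = False  # placeholder for a call to the provider's stop API
            stopped.append(inst.instance_id)
    return stopped


if __name__ == "__main__":
    now = datetime(2024, 1, 10, 12, 0)
    fleet = [
        Instance("i-busy", now - timedelta(hours=1)),
        Instance("i-idle", now - timedelta(days=3)),
    ]
    print(stop_idle_instances(fleet, now))
```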

Another common use case for cloud automation is to establish infrastructure as code. Cloud platforms typically discover and organize compute resources into pools. This enables users to add and deploy more resources without concern for where those resources are physically located in the data center.

Cloud automation processes and tools can draw from these resource pools to define common configuration items, such as VMs, containers, storage LUNs and virtual private networks. Then, they can load application components and services, such as load balancers, onto those configuration items, or create instances using templates or cloned VMs or containers. Finally, those items are assembled to construct a more complete operational environment for a workload deployment.

For example, a cloud automation template could create a certain number of containers for a microservices application, load the software components into the container clusters, connect storage and a database, configure a virtual network, create load balancers for the clusters and then open the workload to users.
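To make the template idea concrete, here is a rough sketch that expands a declarative template into an ordered list of deployment steps, following the sequence described above. The template keys (`app`, `services`, `database`, `network`) and the step wording are invented for illustration and do not correspond to any particular tool's format.

```python
# A hypothetical declarative template: what should exist, not how to create it.
TEMPLATE = {
    "app": "shop",
    "services": {"cart": 3, "catalog": 2},  # service name -> container count
    "database": "shop-db",
    "network": "shop-net",
}


def build_plan(template: dict) -> list[str]:
    """Expand a template into ordered deployment steps."""
    steps: list[str] = []
    for service, count in template["services"].items():
        steps += [f"create container {service}-{i}" for i in range(count)]
        steps.append(f"load {service} software into its containers")
    steps.append(f"connect storage and database {template['database']}")
    steps.append(f"configure virtual network {template['network']}")
    steps += [f"create load balancer for {s}" for s in template["services"]]
    steps.append(f"open {template['app']} to users")
    return steps


if __name__ == "__main__":
    for step in build_plan(TEMPLATE):
        print(step)
```

Because the template is plain data, it can be stored in version control and reused to build identical environments.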

In addition to deployment, cloud automation relates to workload management. For example, IT staff can configure an application performance management tool to monitor the deployed workload and its performance. Alerts trigger automatic scaling tasks, such as adding more containers to a load-balanced cluster to improve performance, or removing excess container instances to pare down resource usage.
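One way a scaling task could decide how many containers to run is a simple proportional rule: size the cluster so that average utilization moves toward a target. The sketch below assumes CPU utilization as the metric; the function name, the 60% target and the replica limits are illustrative choices, not values from any specific product.

```python
import math


def desired_replicas(
    current: int,
    cpu_percent: float,
    target: float = 60.0,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Return a replica count that moves average CPU toward the target."""
    wanted = math.ceil(current * cpu_percent / target)
    # Clamp so the cluster never scales to zero or grows without bound.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    print(desired_replicas(current=4, cpu_percent=90))  # load above target: scale out
    print(desired_replicas(current=4, cpu_percent=30))  # load below target: scale in
```

The upper and lower bounds matter as much as the formula: they keep a faulty metric from removing every container or running up an unbounded bill.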

Cloud automation is a central element of workload lifecycle management. Workloads in the cloud are typically long-term entities, but some of their individual components, such as scaled containers, may be ephemeral. Admins can use cloud automation to remove them, along with their configuration items, when they're no longer needed.
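Removing an ephemeral component "along with its configuration items" implies checking that nothing else still depends on those items. A minimal sketch, assuming a hypothetical inventory that maps each component name to the set of configuration items it uses:

```python
def retire(inventory: dict[str, set[str]], component: str) -> set[str]:
    """Remove a component and return the configuration items that are now unused.

    Items still referenced by another component are kept.
    """
    items = inventory.pop(component, set())
    still_used = set().union(*inventory.values())
    return items - still_used


if __name__ == "__main__":
    inventory = {
        "web-1": {"lb", "vol-1"},
        "web-2": {"lb", "vol-2"},
    }
    # The shared load balancer stays; only web-2's own volume is released.
    print(retire(inventory, "web-2"))
```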

Cloud automation can also play a role in hybrid clouds, to automate tasks in a private cloud environment (such as an OpenStack-based framework) and drive integration with public clouds such as Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform.

Cloud automation is also vital for app developers. Agile and DevOps practices, including continuous integration, continuous delivery and continuous deployment, all depend on rapid resource deployment and scaling to test new software releases. Once the testing is finished, those resources are released for reuse. Public clouds are adept at this behavior, and cloud automation tools can bring the same capabilities to private clouds.

Lastly, cloud automation can provide version control for workflows, allowing organizations to demonstrate consistent setups that stand up to business and regulatory auditing. The business can see exactly which resources are currently in use, identify which users or departments use them, predict how resources will be used in the future and ensure a level of service quality that is impossible with manual processes.
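One simple way to demonstrate that two setups are consistent is to compare fingerprints of their configurations. The sketch below is only an illustration of that idea, using Python's standard `hashlib` and `json` modules; the `fingerprint` helper and the sample configuration keys are assumptions for the example.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Return a SHA-256 digest of a configuration, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    approved = {"image": "web:1.4", "replicas": 3}
    deployed = {"replicas": 3, "image": "web:1.4"}
    # Matching digests indicate the deployed setup equals the approved one.
    print(fingerprint(approved) == fingerprint(deployed))
```

Recording the digest alongside each workflow version gives auditors a compact value to check against what is actually running.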

Cloud automation tools

There is no single cloud automation tool, platform or framework. A myriad of different tools and platforms can be used to automate one task or many, ranging from on-premises tools for private clouds to hosted services from public cloud providers.

Examples of automation services from public cloud providers include the following:

- AWS Config, AWS CloudFormation, AWS Systems Manager (formerly Amazon EC2 Systems Manager);

- Microsoft Azure Resource Manager, Azure Automation;

- Google Cloud Composer, Cloud Deployment Manager; and

- IBM Cloud Orchestrator.

Configuration management tools offer many cloud automation capabilities, particularly with an infrastructure-as-code setup. Examples include the following:

- Red Hat Ansible

- Puppet Enterprise

- Chef Automate

- Salt/SaltStack

- HashiCorp Terraform

Other orchestration tool options include Broadcom (CA Technologies) Automic and Cloudify Orchestration Engine and Workflow Engine.

Many multi-cloud management vendors incorporate automation capabilities into their tools. Some prominent ones are:

- VMware

- CloudBolt

- CloudSphere (Hypergrid)

- Snow (Embotics)

- Morpheus Data

- Scalr

- Flexera (RightScale)