What is a cloud server?

A cloud server is a virtual server that operates in a cloud computing environment and makes its resources accessible to users remotely over a network. Cloud-based servers are intended to provide the same functions, support the same operating systems and applications and offer similar performance characteristics as traditional physical and virtual servers that run in a local data center.

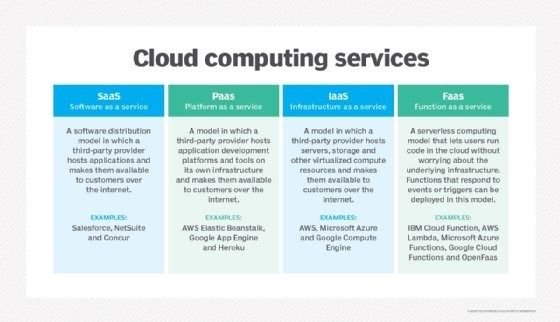

Cloud servers are an important part of cloud computing technology. Widespread adoption of server virtualization has largely contributed to the rise and continued growth of utility-style cloud computing. Cloud servers power every type of cloud computing service model, from infrastructure as a service (IaaS) to platform as a service (PaaS) and software as a service (SaaS).

Cloud servers are often referred to as virtual servers, virtual private servers or virtual platforms.

How do cloud servers work?

Cloud servers work by virtualizing physical servers to make them accessible to users from remote locations. Server virtualization is often, but not always, done through the use of a hypervisor. The compute resources of the physical servers are then used to create and power virtual servers, which are also known as cloud servers. Organizations can access these virtual servers through a working internet connection from any physical location, and resources are allocated to users on an as-needed basis. Cloud servers are provisioned and managed through cloud-based application programming interfaces (APIs).
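The allocation step described above can be sketched in a few lines of Python. This is a toy model, not a real hypervisor or provider API: the `PhysicalHost` and `VirtualServer` classes and their methods are hypothetical names invented for illustration, and the sketch assumes a host never hands out more capacity than it physically has, which real platforms do not always guarantee.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualServer:
    name: str
    vcpus: int
    memory_gb: int


@dataclass
class PhysicalHost:
    """Hypothetical physical server whose capacity is divided among VMs."""
    total_vcpus: int
    total_memory_gb: int
    servers: list = field(default_factory=list)

    def free_vcpus(self) -> int:
        return self.total_vcpus - sum(s.vcpus for s in self.servers)

    def free_memory_gb(self) -> int:
        return self.total_memory_gb - sum(s.memory_gb for s in self.servers)

    def provision(self, name: str, vcpus: int, memory_gb: int) -> VirtualServer:
        """Create a virtual server if the host has enough spare capacity."""
        if vcpus > self.free_vcpus() or memory_gb > self.free_memory_gb():
            raise RuntimeError(f"not enough capacity for {name}")
        server = VirtualServer(name, vcpus, memory_gb)
        self.servers.append(server)
        return server

    def release(self, name: str) -> None:
        """Return a virtual server's resources to the pool."""
        self.servers = [s for s in self.servers if s.name != name]


host = PhysicalHost(total_vcpus=16, total_memory_gb=64)
host.provision("web-1", vcpus=4, memory_gb=16)
host.provision("db-1", vcpus=8, memory_gb=32)
print(host.free_vcpus(), host.free_memory_gb())  # 4 16
host.release("web-1")
print(host.free_vcpus(), host.free_memory_gb())  # 8 32
```

Releasing a virtual server returns its share of the host to the pool, which is what lets a provider reallocate the same hardware to other users as demand changes.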

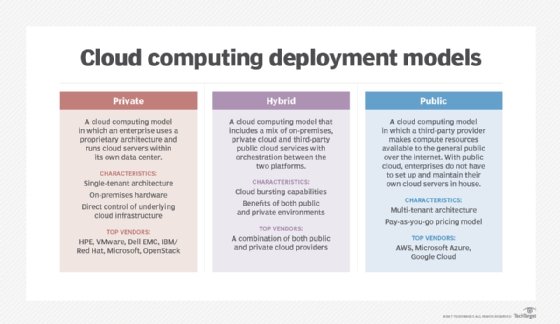

In a public cloud computing model, cloud vendors provide access to these virtual servers, storage and other resources or services in exchange for fees that are typically structured as a pay-as-you-go (PAYG) model.

Cloud service models generally fall into the following main categories:

- IaaS. Cloud computing models that include only traditional infrastructure elements such as virtual servers, storage and networking are called IaaS.

- PaaS. PaaS products provide customers with a cloud computing environment with software and hardware tools for application development, which are powered by cloud servers, storage and networking resources.

- SaaS. In the SaaS model, the vendor delivers a complete, fully managed software product to paying customers through the cloud. SaaS applications rely on cloud servers for compute resources.

- FaaS. Function as a service (FaaS) is a cloud computing model that enables developers to execute code in response to specific events, such as HTTP requests, database updates or file uploads, without the need to manage servers.

Private cloud servers work similarly to public cloud servers, but the underlying physical servers are part of a company's own private cloud infrastructure.
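The event-driven FaaS model from the list above can be illustrated with a small dispatcher. This is a minimal sketch in plain Python, not any provider's actual FaaS interface: the `on` decorator, the `dispatch` function and the event dictionary layout are all assumptions made for the example.

```python
from typing import Callable, Dict

# Hypothetical registry that maps an event type to the function handling it.
_handlers: Dict[str, Callable[[dict], str]] = {}


def on(event_type: str):
    """Register a function to run when an event of this type arrives."""
    def register(func: Callable[[dict], str]) -> Callable[[dict], str]:
        _handlers[event_type] = func
        return func
    return register


@on("http_request")
def handle_http(event: dict) -> str:
    return f"200 OK for {event['path']}"


@on("file_upload")
def handle_upload(event: dict) -> str:
    return f"processed {event['filename']}"


def dispatch(event: dict) -> str:
    """Stand-in for the platform: look up and invoke the matching function."""
    handler = _handlers.get(event["type"])
    if handler is None:
        return f"no handler for {event['type']}"
    return handler(event)


print(dispatch({"type": "http_request", "path": "/status"}))
print(dispatch({"type": "file_upload", "filename": "report.csv"}))
```

The developer writes only the handler functions. In this sketch `dispatch` plays the role of the platform, which in a real service would also start and stop the underlying servers.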

Key differences between a cloud server and a traditional server

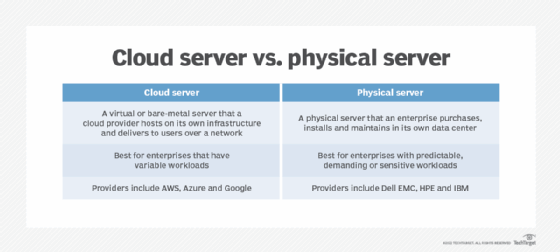

The choice between a cloud server and a traditional physical server often depends on an organization's specific needs, resources and growth plans. Although use of cloud servers for computing tasks can offer customers many specific benefits compared to physical servers, certain use cases can favor traditional on-premises servers.

The key differences between a cloud server and a traditional server include the following:

- Location and management. Cloud servers are hosted and maintained by cloud server providers and are accessed remotely over a network or the internet. Their physical infrastructure is managed in the provider's off-site data centers. Traditional or dedicated servers are physically located on-site within an organization, and the in-house IT staff is responsible for their maintenance, upgrades and troubleshooting.

- Scalability. Cloud server resources can be dynamically adjusted according to demand, enabling businesses to scale computing power up or down in real time. For traditional servers, scaling often requires purchasing and installing additional equipment and hardware, which can be time-consuming and costly.

- Workloads. Cloud servers are generally ideal for organizations with variable workloads, while traditional servers are better suited for enterprises with consistent and high-demand workloads.

- Reliability. Cloud servers typically have built-in redundancy, load balancing and failover options, which enhances reliability. For traditional servers, reliability depends on the organization's infrastructure and additional setups are often required to achieve redundancy.

- Cost structure. Cloud servers typically follow a PAYG model, where businesses only pay for the consumed resources. Traditional physical servers require upfront capital investment for hardware and ongoing maintenance costs.
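The scalability difference in the list above comes down to a decision that a cloud platform can make automatically and repeatedly. The sketch below shows one simple way to make it, a target-tracking rule that sizes the fleet so average CPU use lands near a target. The function name, the 60% target and the fleet limits are assumptions chosen for illustration, not any provider's defaults.

```python
def desired_instances(
    current: int,
    cpu_percent: int,
    target_percent: int = 60,
    minimum: int = 1,
    maximum: int = 10,
) -> int:
    """Return how many instances would bring average CPU near the target.

    Integer arithmetic is used for the ceiling division so the result
    does not depend on floating-point rounding.
    """
    if current < 1 or target_percent < 1:
        raise ValueError("current and target_percent must be at least 1")
    needed = (current * cpu_percent + target_percent - 1) // target_percent
    return max(minimum, min(maximum, needed))


print(desired_instances(current=4, cpu_percent=90))  # 6: scale up
print(desired_instances(current=4, cpu_percent=15))  # 1: scale down
print(desired_instances(current=8, cpu_percent=95))  # 10: capped at maximum
```

With a traditional server, each step up in this calculation would mean buying and installing hardware, while a step down would leave that hardware idle.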

Types of cloud servers

An enterprise can choose from several types of cloud servers. Three primary models include the following:

Public cloud servers

The most common expression of a cloud server is a virtual machine (VM), or compute instance, that a public cloud provider hosts on its own infrastructure and delivers to users across the internet using a web-based interface or console. This model is known as IaaS.

Public cloud servers typically use prefabricated instances, which assign a known number of virtual central processing units (vCPUs) and a known amount of memory. Examples of cloud servers include Amazon Elastic Compute Cloud (EC2) instances, Microsoft Azure virtual machines and Google Compute Engine instances.

Private cloud servers

A cloud server can also be a compute instance within an on-premises private cloud. In this case, an enterprise delivers the cloud server to internal users across a local area network (LAN) and, in some cases, also to external users across the internet.

The primary difference between a hosted public cloud server and a private cloud server is that the latter exists within an organization's own infrastructure, whereas a public cloud server is owned and operated outside of the organization.

Private cloud servers can rely on prefabricated instances but also enable users to select a desired amount of vCPU and memory resources to power the instance. Hybrid clouds can include both public and private cloud servers.

Dedicated cloud servers

In addition to virtual cloud servers, cloud providers can supply physical cloud servers, also known as bare-metal servers, which essentially dedicate a cloud provider's physical server to a user. These dedicated cloud servers, also called dedicated instances, are typically used when an organization must deploy a custom virtualization layer or mitigate the performance and security concerns that often accompany a multi-tenant cloud server.

Cloud servers offer a wide range of compute options, with varying processor and memory resources. This enables an organization to select an instance type that best fits the needs of a specific workload. For example, a smaller Amazon EC2 instance might offer one vCPU and 4 GB of memory, while a larger Amazon EC2 instance provides 96 vCPUs and 384 GB of memory. In addition, it is possible to find cloud server instances that are tailored to unique workload requirements, such as compute-optimized instances that include more processors relative to the amount of memory, or instances that incorporate graphics processing units, or GPUs, for math-intensive workloads.

Although it's common for traditional physical servers to include some storage, most public cloud servers do not include storage resources. Instead, cloud providers typically offer storage as a separate cloud computing service, such as Amazon Simple Storage Service (Amazon S3) and Google Cloud Storage. An organization provisions and associates storage instances with cloud servers to hold content, such as VM images and application data.
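Matching an instance type to a workload can be sketched as a simple filter over a catalog. The catalog below is hypothetical: the two end points reuse the vCPU and memory figures mentioned above, but the instance names, the middle entries and all hourly prices are made up for illustration and do not reflect any provider's actual offerings.

```python
from typing import NamedTuple, Optional


class InstanceType(NamedTuple):
    name: str
    vcpus: int
    memory_gb: int
    hourly_usd: float  # illustrative price, not a real quote


# Hypothetical catalog; only the smallest and largest sizes echo the text.
CATALOG = [
    InstanceType("small", 1, 4, 0.05),
    InstanceType("medium", 4, 16, 0.20),
    InstanceType("compute-optimized", 16, 32, 0.70),
    InstanceType("large", 96, 384, 4.60),
]


def cheapest_fit(vcpus: int, memory_gb: int) -> Optional[InstanceType]:
    """Return the cheapest instance type that meets both requirements."""
    candidates = [
        t for t in CATALOG if t.vcpus >= vcpus and t.memory_gb >= memory_gb
    ]
    return min(candidates, key=lambda t: t.hourly_usd, default=None)


print(cheapest_fit(2, 8).name)    # medium
print(cheapest_fit(8, 16).name)   # compute-optimized
print(cheapest_fit(128, 512))     # None: nothing in the catalog is big enough
```

A processor-heavy request with modest memory needs lands on the compute-optimized entry, which mirrors the way specialized instance families serve unbalanced workloads.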

Features of cloud servers

Cloud servers come with various key characteristics:

- Diverse infrastructure. Based on the use cases and requirements of the cloud computing environment, the computing infrastructure of cloud servers can be physical, virtual or both.

- Self-service provisioning. Cloud servers typically offer self-service provisioning to help users deploy and manage resources by bypassing IT support. This streamlined approach enables users to quickly set up servers and applications on-demand and provide faster response times to changing business needs.

- Reliability. Cloud servers are backed by a pool of physical servers, so if one server fails, its workloads are automatically failed over to the other servers in the pool.

- Serviced through an API. Services on a cloud server are accessed on demand via an API that enables communication between different software applications and services. APIs facilitate provisioning, scaling and monitoring of resources, which makes it easier for developers and businesses to build, deploy and manage applications in the cloud.

- PAYG model. When using cloud services, businesses only pay for the resources they consume on a PAYG basis. This model can provide savings compared with the upkeep and maintenance of traditional physical servers, depending on how heavily the resources are used.

- High availability. Cloud servers are highly available. This is because cloud providers allocate servers across multiple isolated data centers and availability zones, employing advanced failover mechanisms to ensure continuous uptime.

- Compliance and security. Cloud providers invest heavily in compliance and certifications as well as advanced security measures such as firewalls, intrusion detection and prevention systems, data encryption and regular audits to safeguard sensitive information.
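The failover behavior mentioned in the list above can be shown with a small pool that routes requests only to healthy servers. This is a simplified sketch under stated assumptions: the `ServerPool` class is hypothetical, health is set by hand rather than by real health checks, and requests are spread round-robin.

```python
class ServerPool:
    """Hypothetical pool that routes requests to healthy servers only."""

    def __init__(self, names):
        # Dictionaries keep insertion order, so routing is deterministic.
        self.healthy = {name: True for name in names}

    def mark_failed(self, name: str) -> None:
        self.healthy[name] = False

    def route(self, request_id: int) -> str:
        """Pick a healthy server for this request, round-robin."""
        live = [name for name, ok in self.healthy.items() if ok]
        if not live:
            raise RuntimeError("no healthy servers left in the pool")
        return live[request_id % len(live)]


pool = ServerPool(["server-a", "server-b", "server-c"])
print([pool.route(i) for i in range(3)])
# ['server-a', 'server-b', 'server-c']

pool.mark_failed("server-b")
print([pool.route(i) for i in range(3)])
# ['server-a', 'server-c', 'server-a']
```

After `server-b` fails, its share of requests moves to the remaining servers without any change on the caller's side.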

Benefits of cloud servers

The choice to use a cloud server will depend on the needs of the organization and its specific application and workload requirements. Some potential benefits of cloud servers include the following:

- Ease of use. An administrator can provision a cloud server and connect other services to that server in a matter of minutes. With a public cloud server, an organization does not need to worry about server installation, maintenance or other tasks that come with owning a physical server.

- Globalization. Public cloud servers can globalize workloads. With a traditional centralized data center, admins and users can still access workloads globally, but network latency and disruptions can reduce performance for geographically distant users. By hosting duplicate instances of a workload in different global regions, organizations can benefit from faster and often more reliable network access.

- Cost and flexibility. Public cloud servers follow a PAYG model. Compared to a physical server and its maintenance costs, this can be cost-effective, particularly for workloads that only need to run temporarily or are used infrequently. Cloud servers are often used for temporary workloads, such as software development and testing, and for workloads where resources need to be scaled up or down based on demand. However, depending on the amount of use, the long-term cost of running cloud servers full time can exceed the cost of owning the server outright. Furthermore, a full breakdown of cloud computing expenses is important to avoid hidden costs.

- Enhanced collaboration. With centralized data storage and access, cloud servers facilitate better collaboration among team members. Regardless of their geographical or physical location, multiple users can work on the same documents and projects simultaneously.

- Disaster recovery. Cloud servers come with built-in disaster recovery capabilities, such as automated backups, data replication across multiple locations and failover capabilities to ensure that data can be restored quickly in case of data loss or system failure. This automation removes the need for manual intervention, which can be prone to delays and errors.

- Processing power. Cloud servers offer robust processing capabilities, enabling efficient execution of complex computational tasks. High-performance computing enables businesses to run resource-intensive applications and processes without the need for in-house hardware investments.
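The cost trade-off in the list above, where PAYG is cheaper for occasional use but can overtake ownership for full-time use, can be worked through with simple arithmetic. All figures in this sketch are made-up assumptions for illustration: a $0.20 hourly rate, a $3,000 purchase price and $50 of monthly upkeep.

```python
from typing import Optional


def cloud_cost(hourly_usd: float, hours_per_month: float, months: int) -> float:
    """Cumulative PAYG cost: pay only for the hours actually used."""
    return hourly_usd * hours_per_month * months


def owned_cost(purchase_usd: float, upkeep_usd_per_month: float, months: int) -> float:
    """Cumulative cost of buying a server and maintaining it."""
    return purchase_usd + upkeep_usd_per_month * months


def break_even_month(
    hourly_usd: float,
    hours_per_month: float,
    purchase_usd: float,
    upkeep_usd_per_month: float,
    horizon_months: int = 120,
) -> Optional[int]:
    """First month in which cloud spending reaches the cost of ownership."""
    for month in range(1, horizon_months + 1):
        if cloud_cost(hourly_usd, hours_per_month, month) >= owned_cost(
            purchase_usd, upkeep_usd_per_month, month
        ):
            return month
    return None  # cloud stays cheaper over the whole horizon


# Full-time use (about 730 hours a month) versus occasional use (100 hours).
print(break_even_month(0.20, 730, 3000, 50))  # 32
print(break_even_month(0.20, 100, 3000, 50))  # None
```

Under these assumptions, a server running around the clock costs more in the cloud after 32 months, while a workload that runs 100 hours a month never reaches the cost of ownership within the ten-year horizon.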

Challenges and considerations of cloud servers

The choice to use a cloud server can also pose some potential disadvantages for organizations. Here are some of the key challenges:

- Regulation and governance. Regulatory obligations and corporate governance standards might prohibit organizations from using cloud servers and storing data in certain geographic locations -- often outside of the organization's geographic, political or regulatory boundaries.

- Performance. Because cloud servers are typically multi-tenant environments, and an admin has no direct control over those servers' physical location, a VM can be adversely affected by excessive storage or network demands of other cloud servers on the same hardware. This is often referred to as the "noisy neighbor" issue. Dedicated or bare-metal cloud servers can help an organization avoid this problem. In other cases, such problems can be addressed by moving workloads to other resources, availability zones or regions.

- Outages and resilience. Cloud servers are subject to periodic and unpredictable service outages, usually due to a fault within the provider's environment or an unexpected network disruption. For this reason, and because a user has no control over a cloud provider's infrastructure, some organizations choose to keep mission-critical workloads within their local data center rather than in the public cloud. High availability and redundancy for an individual workload are not automatic in public clouds. Users that require greater availability for a workload must deliberately architect that availability into the cloud environment assembled to host the workload.

- Lack of expertise. Organizations can sometimes struggle with a shortage of skilled personnel who are knowledgeable about cloud technologies. This skills gap can hinder effective management and optimization of cloud resources.

- Security and lack of control. Data security is a critical challenge in the adoption of cloud servers. Relying on third-party providers to handle sensitive information raises valid concerns about unauthorized access, data breaches and cyber threats. While cloud service providers offer built-in security features, users must also take proactive measures to safeguard their data.

- Bandwidth sharing. Most virtual cloud servers operate in a multi-tenant environment, where multiple users share the same physical resources. Because data from different users is hosted on the same infrastructure, tenants can end up competing for bandwidth, and organizations might worry about performance issues due to this shared setup.
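The point about architecting availability into a deployment can be made concrete with a short calculation. The sketch below assumes that copies of a workload fail independently of one another, which is a strong simplification: real outages are often correlated, so these results are best read as an upper bound. The 99.5% single-instance figure is an illustrative assumption.

```python
def combined_availability(single: float, copies: int) -> float:
    """Availability of a workload that is up if at least one copy is up.

    Assumes each copy fails independently, which real systems only
    approximate.
    """
    if not 0.0 <= single <= 1.0 or copies < 1:
        raise ValueError("single must be in [0, 1] and copies at least 1")
    return 1.0 - (1.0 - single) ** copies


for copies in (1, 2):
    print(copies, f"{combined_availability(0.995, copies):.4%}")
# 1 99.5000%
# 2 99.9975%
```

Running a second copy in a separate availability zone or region is one common way to approach the independence this calculation assumes.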

When organizations are evaluating the use of cloud servers to satisfy their compute needs, there are a few key considerations:

- Virtual cloud servers vs. physical servers. Although virtual cloud servers can be convenient, easy to manage and budget-friendly, they are better suited to highly variable workloads than to data-intensive workloads. Generally, physical servers are more customizable and powerful than virtual servers.

- Types of virtualization. Though hypervisor-assisted virtualization is the most common, there are other types of server virtualization, such as hardware, hardware-assisted, paravirtualization and operating system-level.

- Security. Security remains a major concern for cloud technology. Providers should ensure they have the right security options in place to protect their virtual servers. Major providers rely on carefully refined security policies and multi-layered monitoring and defenses to bolster security.

When considering any type of cloud service, organizations should examine the specific cloud servers the provider uses, such as the type, configuration and virtualization technology.

How to choose a cloud server

Choosing the right cloud server is crucial for ensuring that business operations run smoothly and efficiently.

Here are some key steps to consider when selecting a cloud service provider:

- Evaluate the business requirements. Companies should start by evaluating their specific requirements such as the type of applications that are being used, the amount of storage required and the expected traffic levels. It's also important to evaluate whether the company's workloads are variable or data-sensitive because cloud platforms are better suited for variable workloads.

- Research the security track record of the provider. Security should be at the top of the list when assessing a cloud provider. Companies should investigate the security track record of the provider and ensure that they offer robust security measures such as data encryption, access controls and compliance with industry standards and regulations.

- Consider scalability. Businesses should look for a provider that offers easy scalability. It's important to ensure that the selected cloud provider can accommodate a growing business without significant downtime or additional costs.

- Check performance and reliability factors. The provider's performance metrics, such as uptime guarantees and latency levels, should be carefully evaluated. A dependable cloud service provider, for instance, should ensure high availability, ideally exceeding 99.5% uptime, to minimize disruptions to operations.

- Review pricing models. It's important to understand the pricing structure of the cloud service provider. Organizations should look for transparency in costs and consider whether the pricing aligns with the company's budget. For instance, some providers offer PAYG models, while others might offer fixed pricing.

- Examine customer support. Good customer support is essential for prompt issue resolution. Companies should evaluate the available support options that the provider offers including 24/7 assistance, live chat and dedicated account managers. Reading reviews and testimonials can also provide insights into the quality of customer service provided by the potential cloud service provider.

- Consider data center locations. It's important to consider the geographical location of the provider's data centers when making the selection since it can affect performance and compliance. It's best to choose a provider with data centers located near the target audience to reduce latency and ensure compliance with local data protection laws.
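When comparing uptime guarantees such as the 99.5% figure in the list above, it helps to translate the percentage into allowed downtime. The sketch below does that conversion, assuming a 365-day year. The function name is made up for the example, and the higher percentages are included only for comparison.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Hours per year a service can be down and still meet the guarantee."""
    if not 0.0 <= uptime_percent <= 100.0:
        raise ValueError("uptime_percent must be between 0 and 100")
    return (100.0 - uptime_percent) / 100.0 * HOURS_PER_YEAR


for percent in (99.5, 99.9, 99.99):
    print(f"{percent}% uptime allows {allowed_downtime_hours(percent):.2f} hours of downtime a year")
# 99.5% uptime allows 43.80 hours of downtime a year
# 99.9% uptime allows 8.76 hours of downtime a year
# 99.99% uptime allows 0.88 hours of downtime a year
```

A guarantee of 99.5% still permits almost two days of outage a year, so small differences in the stated percentage can matter for workloads that must stay online.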
