
Compare AWS Lambda vs. Azure Functions vs. Google Cloud Functions

Trying to find the right serverless service? Evaluate differences in price, language support and deployment models for AWS Lambda, Azure Functions and Google Cloud Functions.

AWS Lambda, Azure Functions and Google Cloud Functions all offer similar functionality and advantages. So, how do you choose the right one for your project?

All three serverless services let users pay only for the time a function runs, rather than paying continuously for a cloud server whether or not it's active. However, there are some important differences when comparing AWS Lambda, Azure Functions and Google Cloud Functions.

Serverless compute shoppers should focus on the differences to make an informed choice. We take a closer look specifically at these three areas: deployment models, programming language support and pricing.

Deployment models

AWS Lambda deploys all functions in the Lambda environment on servers that run Amazon Linux. Lambda functions can interact with other services on the AWS cloud or elsewhere in numerous ways, but function deployment is limited to the Lambda service.

Google Cloud Functions requires functions to be packaged as container images and stored in Google's Container Registry, and it executes each function as a container.

Compared to AWS Lambda and Google Cloud Functions, Azure Functions offers more flexible, but also more complex, options for deploying serverless functions as part of a larger workload.

Azure Functions users can deploy code directly on the Azure Functions service or run it inside Docker containers. The latter option gives programmers more control over the execution environment, because they define that environment in a Dockerfile. Functions packaged this way can also be deployed to Kubernetes through integration with Kubernetes Event-driven Autoscaling (KEDA).

Azure Functions also offers the option to deploy functions to either Windows- or Linux-based servers. In most cases, the host OS should not make a difference. However, if your serverless functions have OS-specific code or dependencies, such as a programming language or library that runs only on Linux, this is an important factor.
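If a function does depend on Linux-only code, one defensive option is to check the OS at startup and fail with a clear message if the function ends up on the wrong kind of host. This Go sketch uses the standard `runtime.GOOS` constant; the Linux-only dependency it guards is hypothetical.

```go
package main

import (
	"fmt"
	"os"
	"runtime"
)

// requiredOS is the OS needed by a hypothetical Linux-only dependency.
const requiredOS = "linux"

// checkHostOS returns an error when goos does not match requiredOS.
func checkHostOS(goos string) error {
	if goos != requiredOS {
		return fmt.Errorf("this function needs a %s host, but is running on %s", requiredOS, goos)
	}
	return nil
}

func main() {
	// runtime.GOOS is the target OS the binary was compiled for; for a
	// natively compiled function this matches the host it runs on.
	if err := checkHostOS(runtime.GOOS); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Println("host OS check passed:", runtime.GOOS)
}
```

Go build constraints such as `//go:build linux` offer a compile-time alternative that keeps Linux-only files out of a Windows build entirely.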

Programming language support

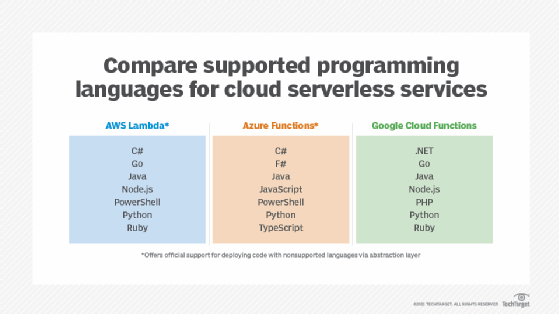

Serverless applications are written in many languages, and programming language support is another difference among AWS Lambda, Azure Functions and Google Cloud Functions. All three services can directly execute serverless functions written in Java, JavaScript (Node.js) and Python. Beyond this, however, there are differences. For example, Azure Functions lists TypeScript as a natively supported language, and only AWS Lambda and Google Cloud Functions natively support Go and Ruby.

However, both AWS Lambda and Azure Functions can run code in virtually any other programming language with the help of an abstraction layer that lets the service execute code in a language it does not natively support.

AWS Lambda does this through custom runtimes. A custom runtime is an executable, compiled for Amazon Linux, that fetches invocation events from Lambda over HTTP and runs code written in a programming language that AWS Lambda does not directly support.
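To make the custom runtime idea concrete, here is a minimal Go sketch of the `bootstrap` executable that a custom runtime (for example on the `provided.al2023` runtime) provides. It polls the Lambda Runtime API, whose address Lambda passes in the `AWS_LAMBDA_RUNTIME_API` environment variable, and posts each result back. The `handle` function is a hypothetical stand-in for real function code, and error reporting to the Runtime API's error endpoint is omitted for brevity.

```go
// bootstrap: a minimal sketch of an AWS Lambda custom runtime in Go.
package main

import (
	"bytes"
	"fmt"
	"io"
	"net/http"
	"os"
)

const apiVersion = "2018-06-01"

// nextURL is the Runtime API endpoint that blocks until an invocation arrives.
func nextURL(api string) string {
	return fmt.Sprintf("http://%s/%s/runtime/invocation/next", api, apiVersion)
}

// responseURL is where the result for a given request ID is posted.
func responseURL(api, requestID string) string {
	return fmt.Sprintf("http://%s/%s/runtime/invocation/%s/response", api, apiVersion, requestID)
}

// handle is hypothetical function code: it wraps the JSON event in {"echo": ...}.
func handle(event []byte) []byte {
	var buf bytes.Buffer
	buf.WriteString(`{"echo":`)
	buf.Write(event)
	buf.WriteString(`}`)
	return buf.Bytes()
}

func main() {
	api := os.Getenv("AWS_LAMBDA_RUNTIME_API")
	if api == "" {
		fmt.Fprintln(os.Stderr, "AWS_LAMBDA_RUNTIME_API is not set; not running inside Lambda")
		return
	}
	for {
		// Wait for the next invocation event.
		resp, err := http.Get(nextURL(api))
		if err != nil {
			fmt.Fprintln(os.Stderr, "fetching next invocation:", err)
			os.Exit(1)
		}
		event, err := io.ReadAll(resp.Body)
		resp.Body.Close()
		if err != nil {
			fmt.Fprintln(os.Stderr, "reading event:", err)
			os.Exit(1)
		}
		requestID := resp.Header.Get("Lambda-Runtime-Aws-Request-Id")

		// Run the function code and return its result for this request ID.
		out, err := http.Post(responseURL(api, requestID), "application/json", bytes.NewReader(handle(event)))
		if err != nil {
			fmt.Fprintln(os.Stderr, "posting response:", err)
			os.Exit(1)
		}
		out.Body.Close()
	}
}
```

Built with `GOOS=linux` and named `bootstrap`, the binary would be zipped and uploaded as the function package. The same poll-and-respond loop applies whatever language the runtime itself is written in.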

Azure Functions uses custom handlers. A custom handler is a lightweight web server: the Azure Functions host forwards each invocation to it over HTTP, so code written in an unsupported language only needs to handle HTTP requests. The Azure Functions approach is a bit more complex for developers to implement, but it is also more flexible.
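As a minimal sketch, assume the function app's `host.json` sets `customHandler.enableForwardingHttpRequest` to `true` and defines an HTTP-triggered function named `hello` (a hypothetical name). A Go custom handler is then an ordinary web server: the Functions host supplies the port in the `FUNCTIONS_CUSTOMHANDLER_PORT` environment variable and forwards the original HTTP request to it.

```go
// handler: a minimal sketch of an Azure Functions custom handler in Go.
package main

import (
	"fmt"
	"log"
	"net/http"
	"os"
)

// greeting builds the response text; "name" is a hypothetical query parameter.
func greeting(name string) string {
	if name == "" {
		return "Hello from a Go custom handler.\n"
	}
	return fmt.Sprintf("Hello, %s, from a Go custom handler.\n", name)
}

func helloHandler(w http.ResponseWriter, r *http.Request) {
	fmt.Fprint(w, greeting(r.URL.Query().Get("name")))
}

func main() {
	// The Functions host tells the handler which port to listen on.
	port, ok := os.LookupEnv("FUNCTIONS_CUSTOMHANDLER_PORT")
	if !ok {
		log.Println("FUNCTIONS_CUSTOMHANDLER_PORT is not set; not running under the Functions host")
		return
	}
	// With request forwarding enabled, the HTTP-triggered function "hello"
	// arrives on its original route, /api/hello.
	http.HandleFunc("/api/hello", helloHandler)
	log.Fatal(http.ListenAndServe(":"+port, nil))
}
```

Without request forwarding, the host instead POSTs a JSON payload describing the trigger and bindings to a route named after the function, and the handler must reply in a matching JSON format. That extra contract is where the added implementation effort comes in.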

Google Cloud Functions doesn't provide an official method for executing functions written in languages other than those it directly supports.

Pricing

Each cloud provider charges its serverless users based on the amount of memory their functions use, how long the functions run and the number of times they are invoked. However, there are some areas in which serverless costs differ.
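Most serverless compute bills follow the same basic arithmetic: a per-invocation charge plus a charge for memory multiplied by run time, usually expressed in GB-seconds. The Go sketch below applies that model. The two rates are illustrative placeholders rather than any provider's published prices, and free tiers are ignored.

```go
package main

import "fmt"

// Illustrative placeholder rates, not any provider's published prices.
const (
	pricePerMillionRequests = 0.20      // USD per 1M invocations
	pricePerGBSecond        = 0.0000167 // USD per GB-second
)

// monthlyCost estimates compute cost as an invocation charge plus
// memory (GB) x duration (seconds) x the per-GB-second rate.
func monthlyCost(invocations, memoryMB int, avgDurationMs float64) float64 {
	gbSeconds := float64(invocations) * (float64(memoryMB) / 1024) * (avgDurationMs / 1000)
	requestCost := float64(invocations) / 1_000_000 * pricePerMillionRequests
	return requestCost + gbSeconds*pricePerGBSecond
}

func main() {
	// 3 million invocations at 512 MB with a 120 ms average duration.
	fmt.Printf("estimated monthly cost: $%.2f\n", monthlyCost(3_000_000, 512, 120))
}
```

With these placeholder rates, 3 million invocations at 512 MB and 120 ms come to 180,000 GB-seconds, or about $3.61 in total. Doubling memory doubles the GB-second term, which is why memory sizing matters as much as invocation count.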

Data transfers

AWS charges additional fees for data transfers between AWS Lambda and its storage services if the data moves between different cloud regions. There is no fee if the functions and data storage exist within the same region.

With Azure Functions and Google Cloud Functions, inbound data transfers are always free, although both services do charge for outbound transfers if data moves between cloud regions.
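The data transfer rules described above can be summarized as a small decision function. This Go sketch encodes only the simplified rules as stated here: same-region transfers are free, AWS charges for cross-region transfers, and Azure and Google Cloud charge only for cross-region outbound transfers. The provider labels are hypothetical identifiers, and real price lists include many more cases, such as transfers to the internet.

```go
package main

import "fmt"

// Direction of a data transfer relative to the function.
type Direction int

const (
	Inbound Direction = iota
	Outbound
)

// transferBillable reports whether a transfer is charged under the
// simplified rules above; it is not a complete pricing model.
func transferBillable(provider string, dir Direction, crossRegion bool) bool {
	if !crossRegion {
		return false // same-region transfers are not charged under these rules
	}
	switch provider {
	case "aws":
		return true // cross-region transfers are charged in either direction
	case "azure", "gcp":
		return dir == Outbound // inbound is free; cross-region outbound is charged
	default:
		panic("unknown provider: " + provider)
	}
}

func main() {
	for _, p := range []string{"aws", "azure", "gcp"} {
		fmt.Printf("%-5s cross-region inbound billable: %v\n", p, transferBillable(p, Inbound, true))
	}
}
```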

Upgraded offerings

AWS also charges extra for Provisioned Concurrency in Lambda, which keeps functions initialized so that they can handle requests more quickly. The charge is based on the memory allocated to the function, the amount of concurrency configured and how long it remains enabled.
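A rough estimate of the Provisioned Concurrency charge can be sketched in Go, assuming it scales with configured memory, the number of pre-initialized execution environments and how long they stay enabled. The rate is an illustrative placeholder, and the request and duration charges for actual invocations, which are billed in addition, are left out.

```go
package main

import "fmt"

// Illustrative placeholder rate, not a published AWS price.
const provisionedPricePerGBSecond = 0.000004 // USD per GB-second

// provisionedCharge estimates the cost of keeping `concurrency` execution
// environments, each configured with memoryMB, initialized for `hours`.
func provisionedCharge(concurrency, memoryMB int, hours float64) float64 {
	gbSeconds := float64(concurrency) * (float64(memoryMB) / 1024) * hours * 3600
	return gbSeconds * provisionedPricePerGBSecond
}

func main() {
	// 10 environments at 1,024 MB, enabled for a 30-day month.
	fmt.Printf("provisioned concurrency: $%.2f\n", provisionedCharge(10, 1024, 24*30))
}
```

With the placeholder rate, keeping 10 environments of 1,024 MB warm for a 30-day month works out to 25,920,000 GB-seconds, or about $103.68, before any invocation charges.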

For users who sign up for the Premium plan, Azure Functions adds virtual network connectivity and better performance than the base Consumption plan, including prewarmed instances that help avoid cold starts.

Registries

A final pricing difference involves registries to host serverless functions. In Google Cloud, functions are stored in Google's Container Registry, which is a paid service. Users must, therefore, pay fees to store their functions in the registry, even if their actual function usage remains within the limits of the free tier. The other clouds don't have a required registry storage fee for serverless functions.

These small nuances in serverless pricing can be significant for certain deployments. Teams that use multiple cloud regions might find Azure Functions or Google Cloud Functions more cost-effective because those services do not charge for inbound data transfers. The additional features of the Azure Functions Premium plan, such as virtual networking, may also appeal to some organizations.