Infrastructure as a Service (IaaS)

What is IaaS?

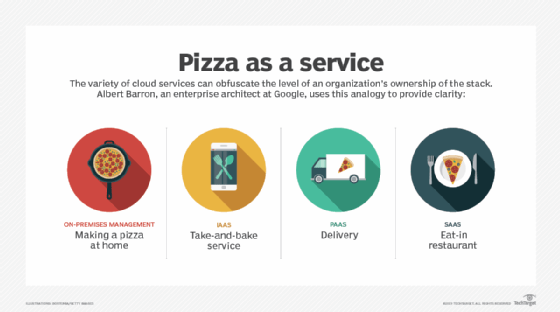

Infrastructure as a service (IaaS) is a form of cloud computing that provides virtualized computing resources over the internet. IaaS is one of the three main categories of cloud computing services, alongside software as a service (SaaS) and platform as a service (PaaS).

In the IaaS model, the cloud provider manages IT infrastructures such as storage, server and networking resources, and delivers them to subscriber organizations via virtual machines accessible through the internet. IaaS can have many benefits for organizations, such as potentially making workloads faster, easier, more flexible and more cost efficient.

IaaS architecture

In an IaaS service model, a cloud provider hosts the infrastructure components that are traditionally present in an on-premises data center. This includes servers, storage and networking hardware, as well as the virtualization or hypervisor layer.

IaaS providers also supply a range of services to accompany those infrastructure components. These can include the following:

- detailed billing;

- monitoring;

- log access;

- security;

- load balancing;

- clustering; and

- storage resiliency, such as backup, replication and recovery.

These services are increasingly policy-driven, enabling IaaS users to implement greater levels of automation and orchestration for important infrastructure tasks. For example, a user can implement policies to drive load balancing to maintain application availability and performance.
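
As a minimal sketch of what a policy-driven load-balancing decision might look like, the following Python models a policy as a per-server connection limit and routes each request to the least-loaded healthy server. The `Backend` and `BalancingPolicy` names and the connection limit are illustrative assumptions, not part of any provider's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Backend:
    """Hypothetical server sitting behind a load balancer."""
    name: str
    healthy: bool
    active_connections: int


@dataclass
class BalancingPolicy:
    """Hypothetical policy: cap the connections any one backend may hold."""
    max_connections_per_backend: int


def choose_backend(backends: List[Backend], policy: BalancingPolicy) -> Optional[Backend]:
    """Return the least-loaded healthy backend under the policy limit, or None."""
    eligible = [
        b for b in backends
        if b.healthy and b.active_connections < policy.max_connections_per_backend
    ]
    if not eligible:
        return None  # nothing satisfies the policy; a real system might scale out here
    return min(eligible, key=lambda b: b.active_connections)


pool = [
    Backend("web-1", healthy=True, active_connections=40),
    Backend("web-2", healthy=False, active_connections=5),
    Backend("web-3", healthy=True, active_connections=12),
]
target = choose_backend(pool, BalancingPolicy(max_connections_per_backend=50))
print(target.name if target else "no backend available")
```

The unhealthy server is skipped even though it has the fewest connections, which is how a policy of this kind would protect availability.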

How does IaaS work?

IaaS customers access resources and services through a wide area network (WAN), such as the internet, and can use the cloud provider's services to install the remaining elements of an application stack. For example, the user can log in to the IaaS platform to create virtual machines (VMs); install operating systems in each VM; deploy middleware, such as databases; create storage buckets for workloads and backups; and install the enterprise workload into that VM. Customers can then use the provider's services to track costs, monitor performance, balance network traffic, troubleshoot application issues and manage disaster recovery.
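
The order of those steps can be sketched with a small in-memory model. This is only an illustration under stated assumptions: `IaasAccount`, `VirtualMachine` and the resource names are hypothetical and do not correspond to any real provider's SDK.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VirtualMachine:
    """Hypothetical in-memory stand-in for a VM rented from an IaaS provider."""
    name: str
    operating_system: Optional[str] = None
    middleware: List[str] = field(default_factory=list)
    workload: Optional[str] = None

    def install_os(self, os_name: str) -> None:
        self.operating_system = os_name

    def deploy_middleware(self, component: str) -> None:
        # Middleware sits on top of the operating system, so require one first.
        if self.operating_system is None:
            raise RuntimeError("install an operating system before middleware")
        self.middleware.append(component)

    def install_workload(self, workload: str) -> None:
        if self.operating_system is None:
            raise RuntimeError("install an operating system before the workload")
        self.workload = workload


@dataclass
class IaasAccount:
    """Hypothetical customer account that tracks what has been provisioned."""
    vms: List[VirtualMachine] = field(default_factory=list)
    buckets: List[str] = field(default_factory=list)

    def create_vm(self, name: str) -> VirtualMachine:
        vm = VirtualMachine(name)
        self.vms.append(vm)
        return vm

    def create_bucket(self, name: str) -> None:
        self.buckets.append(name)


# Follow the sequence described above: VM, OS, middleware, storage, workload.
account = IaasAccount()
vm = account.create_vm("app-server-1")
vm.install_os("linux")
vm.deploy_middleware("database")
account.create_bucket("backups")
vm.install_workload("order-processing")
```

The checks in `deploy_middleware` and `install_workload` reflect the layering of the stack: the customer builds upward from the VM the provider supplies.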

Any cloud computing model requires the participation of a provider. The provider is often a third-party organization that specializes in selling IaaS. Amazon Web Services (AWS) and Google Cloud Platform (GCP) are examples of independent IaaS providers. A business might also opt to deploy a private cloud, becoming its own provider of infrastructure services.

What are the advantages of IaaS?

Organizations choose IaaS because it is often easier, faster and more cost-efficient to operate a workload without having to buy, manage and support the underlying infrastructure. With IaaS, a business can simply rent or lease that infrastructure from another business.

IaaS is an effective cloud service model for workloads that are temporary, experimental or that change unexpectedly. For example, if a business is developing a new software product, it might be more cost-effective to host and test the application using an IaaS provider.

Once the new software is tested and refined, the business can remove it from the IaaS environment for a more traditional, in-house deployment. Conversely, the business could commit that piece of software to a long-term IaaS deployment if the costs of a long-term commitment are lower.

In general, IaaS customers pay on a per-use basis, typically by the hour, week or month. Some IaaS providers also charge customers based on the amount of virtual machine space they use. This pay-as-you-go model eliminates the capital expense of deploying in-house hardware and software.
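
The pay-as-you-go arithmetic can be illustrated with a short calculation. The rates and usage figures below are made-up assumptions chosen for easy arithmetic, not any provider's actual pricing.

```python
from decimal import Decimal


def usage_charge(vm_hours, hourly_rate, storage_gb, storage_rate_per_gb):
    """Return (compute, storage, total) charges for one billing period."""
    compute = Decimal(vm_hours) * hourly_rate
    storage = Decimal(storage_gb) * storage_rate_per_gb
    return compute, storage, compute + storage


# Hypothetical usage: 200 VM-hours at 0.10 per hour, 500 GB at 0.02 per GB.
compute, storage, total = usage_charge(
    vm_hours=200,
    hourly_rate=Decimal("0.10"),
    storage_gb=500,
    storage_rate_per_gb=Decimal("0.02"),
)
print(f"compute={compute} storage={storage} total={total}")
# prints: compute=20.00 storage=10.00 total=30.00
```

`Decimal` is used instead of floating point so that currency amounts are not distorted by binary rounding.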

When a business cannot use third-party providers, a private cloud built on premises can still offer the control and scalability of IaaS -- though the cost benefits no longer apply.

Enterprises’ infrastructure management responsibilities change, depending on whether they choose an on-premises, IaaS, PaaS or SaaS deployment.

What are the disadvantages of IaaS?

Despite its flexible, pay-as-you-go model, IaaS billing can be a problem for some businesses. Cloud billing is extremely granular, and it is broken out to reflect the precise usage of services. It is common for users to experience sticker shock -- finding costs to be higher than expected -- when reviewing the bills for every resource and service involved in application deployment. Users should monitor their IaaS environments and bills closely to understand how IaaS is being used and to avoid being charged for unauthorized services.
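
One way to approach that kind of bill monitoring is sketched below: total the granular line items per service, then flag services that were never approved or that exceed a budget. The service names, costs and budgets are hypothetical, and a real bill would come from the provider's billing export rather than a hard-coded list.

```python
from collections import defaultdict
from decimal import Decimal


def review_bill(line_items, approved_services, budget_by_service):
    """Aggregate line items and flag unauthorized or over-budget services.

    line_items: iterable of (service_name, cost) pairs.
    Returns (totals, unauthorized, over_budget).
    """
    totals = defaultdict(Decimal)  # Decimal() starts each service at 0
    for service, cost in line_items:
        totals[service] += cost
    unauthorized = sorted(s for s in totals if s not in approved_services)
    over_budget = sorted(
        s for s, spent in totals.items()
        if s in budget_by_service and spent > budget_by_service[s]
    )
    return dict(totals), unauthorized, over_budget


# Hypothetical line items for one billing period.
items = [
    ("compute", Decimal("120.00")),
    ("storage", Decimal("15.50")),
    ("compute", Decimal("95.25")),
    ("gpu", Decimal("310.00")),
]
totals, unauthorized, over_budget = review_bill(
    items,
    approved_services={"compute", "storage"},
    budget_by_service={"compute": Decimal("200.00"), "storage": Decimal("50.00")},
)
print(totals, unauthorized, over_budget)
```

Here `gpu` would be reported as unauthorized and `compute` as over budget, the two situations the paragraph above warns about.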

Insight is another common problem for IaaS users. Because IaaS providers own the infrastructure, the details of their infrastructure configuration and performance are rarely transparent to IaaS users. This lack of transparency can make systems management and monitoring more difficult for users.

IaaS users are also concerned about service resilience. The workload's availability and performance are highly dependent on the provider. If an IaaS provider experiences network bottlenecks or any form of internal or external downtime, the users' workloads will be affected. In addition, because IaaS is a multi-tenant architecture, the noisy neighbor issue can negatively impact users' workloads.

IaaS vs. SaaS vs. PaaS

IaaS is only one of several cloud computing models and can be complemented by combining it with PaaS and SaaS.

PaaS builds on the IaaS model because, in addition to the underlying infrastructure components, providers host, manage and offer operating systems, middleware and other runtimes for cloud users. While PaaS simplifies workload deployment, it also restricts a business's flexibility to create the environment that it wants.

With SaaS, providers host, manage and offer the entire infrastructure, as well as applications, for users. SaaS users do not need to install anything; they simply log in and use the provider's application, which runs on the provider's infrastructure. Users have some ability to configure the way that the application works and which users are authorized to use it, but the SaaS provider is responsible for everything else.
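
The division of responsibility across these models can be expressed as a simple lookup. The sketch below assumes a simplified stack built from the layers named in this article; real offerings draw the line in slightly different places, and SaaS customers still handle application configuration and user authorization.

```python
# Simplified stack, ordered from the hardware upward (an assumption for illustration).
STACK = [
    "networking",
    "storage",
    "servers",
    "virtualization",
    "operating system",
    "middleware",
    "runtime",
    "application",
]

# Number of layers, counted from the bottom, that the provider manages.
PROVIDER_MANAGED_LAYERS = {
    "on-premises": 0,
    "IaaS": 4,
    "PaaS": 7,
    "SaaS": 8,
}


def responsibilities(model):
    """Split the stack into provider-managed and customer-managed layers."""
    cut = PROVIDER_MANAGED_LAYERS[model]
    return {"provider": STACK[:cut], "customer": STACK[cut:]}


for model in PROVIDER_MANAGED_LAYERS:
    split = responsibilities(model)
    print(f"{model}: customer manages {split['customer'] or 'nothing in this stack'}")
```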

What are IaaS use cases?

IaaS can serve a broad variety of purposes because the compute resources it delivers through a cloud model fit many kinds of workloads. The most common use cases for IaaS deployments include the following:

- Testing and development environments. IaaS offers organizations flexibility when it comes to different test and development environments. They can easily be scaled up or down according to needs.

- Hosting customer-facing websites. IaaS can make it more affordable to host a website than traditional hosting methods.

- Data storage, backup and recovery. IaaS can be the easiest and most efficient way for organizations to manage data when demand is unpredictable or might steadily increase. Furthermore, organizations can reduce the effort they spend on the management, legal and compliance requirements of data storage.

- Web applications. IaaS provides the infrastructure needed to host web apps, including storage resources, servers and networking. Deployments can be made quickly, and the cloud infrastructure can be easily scaled up or down according to the application's demand.

- High-performance computing (HPC). Certain workloads may demand HPC-level computing, such as scientific computations, financial modeling and product design work.

- Data warehousing and big data analytics. IaaS can provide the necessary compute and processing power to comb through big data sets.
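
Several of these use cases depend on scaling infrastructure up or down with demand. A minimal sketch of that decision, assuming a hypothetical fixed capacity per instance and hypothetical minimum and maximum fleet sizes, might look like this:

```python
import math


def desired_instances(current_load, capacity_per_instance,
                      min_instances=1, max_instances=10):
    """Return how many instances are needed for the load, within fleet limits.

    current_load and capacity_per_instance share a unit, e.g. requests per second.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    needed = math.ceil(current_load / capacity_per_instance)
    # Clamp to the configured floor and ceiling.
    return max(min_instances, min(max_instances, needed))


print(desired_instances(450, 100))   # prints 5
print(desired_instances(0, 100))     # prints 1 (never below the minimum)
print(desired_instances(5000, 100))  # prints 10 (capped at the maximum)
```

The cap matters for cost control: without it, a traffic spike translates directly into a larger bill.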

Major IaaS vendors and products

There are many examples of IaaS vendors and products. IaaS products offered by the three largest public cloud service providers -- Amazon Web Services (AWS), Google, and Microsoft -- include the following:

- AWS offers storage services such as Simple Storage Service (S3) and Glacier, as well as compute services, including its Elastic Compute Cloud (EC2).

- Google Cloud Platform (GCP) offers storage and compute services through Google Compute Engine.

- Microsoft Azure Virtual Machines offers cloud virtualization for many different cloud computing purposes.

This is just a small sample of the broad range of services offered by major IaaS providers. Services can include serverless functions, such as AWS Lambda, Azure Functions or Google Cloud Functions; database access; big data compute environments; monitoring; logging; and more.

There are also many smaller or more niche players in the IaaS marketplace, including these products:

- Rackspace Managed Cloud;

- IBM Cloud Private;

- IBM Cloud Virtual Servers;

- CenturyLink Cloud;

- DigitalOcean Droplets;

- Alibaba Elastic Compute Service;

- Alibaba Cloud Elastic High Performance Computing (E-HPC); and

- Alibaba Elastic GPU Service (EGS).

Users will need to carefully consider the services, reliability and costs before choosing a provider -- and be ready to select an alternate provider and to redeploy to the alternate infrastructure if necessary.

How do you implement IaaS?

When looking to implement an IaaS product, there are important considerations to make. The IaaS use cases and infrastructure needs should be strictly defined before technical requirements and providers are considered. Technical and storage needs to consider when implementing IaaS include the following:

- Networking. When focusing on cloud deployments, organizations need to ask certain questions to make sure that the provisioned infrastructure in the cloud can be accessed in an efficient manner.

- Storage. Organizations should consider requirements for storage types, required storage performance levels, possible space needed, provisioning and potential options such as object storage.

- Compute. Organizations should consider the implications of different server, VM, CPU and memory options that cloud providers can offer.

- Security. Data security should be of paramount importance when evaluating cloud services and providers. Questions about data encryption, certifications, compliance and regulation, and secure workloads should be pursued in detail.

- Disaster recovery. Disaster recovery features and options are another key area of value for organizations when failover is needed at the VM, server or site level.

- Server size. Organizations should review the options for server and VM sizes, how many CPUs can be placed onto servers, and other CPU and memory details.

- Throughput of the network. Organizations should check the speed between VMs, data centers, storage and the internet.

- General manageability. Organizations should determine how many features of the IaaS the user can control, which parts need to be controlled, and how easy they are to control and manage.

During the implementation process, organizations should closely consider how the technical and service offerings of different providers fulfill business-side needs, as well as the business's own specific usage requirements. The market for IaaS vendors should be carefully evaluated; with considerable variance of capabilities within products, some may better align with business needs than others.
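
One common way to structure such an evaluation is a weighted scoring matrix over the considerations listed above. The sketch below is only an illustration: the provider names, scores and weights are invented, and each organization would choose its own criteria and weighting.

```python
def weighted_score(scores, weights):
    """Return the weighted average of a provider's scores.

    scores and weights are dicts keyed by criterion name.
    """
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[c] * w for c, w in weights.items()) / total_weight


def rank_providers(candidates, weights):
    """Return provider names ordered from best to worst weighted score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name], weights),
        reverse=True,
    )


# Hypothetical weights (importance) and 1-5 scores for two made-up providers.
weights = {"security": 3, "storage": 1}
candidates = {
    "provider_a": {"security": 5, "storage": 2},
    "provider_b": {"security": 3, "storage": 5},
}
print(rank_providers(candidates, weights))  # prints ['provider_a', 'provider_b']
```

Because security is weighted three times as heavily as storage, `provider_a` ranks first (4.25 versus 3.5) despite its weaker storage score.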

Once a vendor and product are chosen, it is important to negotiate all service-level agreements. Thorough negotiation with the vendor makes it less likely that the organization will be negatively affected by previously unknown fine-print details.

Furthermore, an organization should thoroughly assess the capabilities of its IT department to determine how well equipped it is to deal with the ongoing demands of IaaS implementation. In the IaaS model, in-house developers are responsible for the technical maintenance of the systems they run on the infrastructure -- including software patches, upgrades and troubleshooting. This personnel assessment is needed to ensure that the organization is equipped to maximize value on all fronts from an IaaS implementation.