What is the zero-trust security model?

The zero-trust security model is a cybersecurity approach that denies access to an enterprise's digital resources by default and grants authenticated users and devices tailored, siloed access to only the applications, data, services and systems they need to do their jobs. Gartner has predicted that by 2025, 60% of organizations will embrace a zero-trust security strategy.

This guide covers the origins of zero trust, its principles, the technologies and products that enable a zero-trust model, and how to implement and manage it.

What is zero trust?

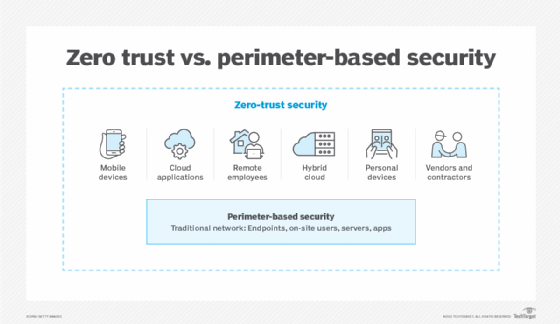

Historically, enterprises have relied on a castle-and-moat cybersecurity model, in which anyone outside the corporate network perimeter is suspect and anyone inside gets the benefit of the doubt. The assumption that internal users are inherently trustworthy, known as implicit trust, has resulted in many costly data breaches, with attackers able to move laterally throughout the network if they make it past the perimeter.

Instead of focusing on user and device locations relative to the perimeter -- i.e., inside or outside the private network -- the zero-trust model grants users access to information based on their identities and roles, regardless of whether they are at the office, at home or elsewhere.

In zero trust, authorization and authentication happen continuously throughout the network, rather than just once at the perimeter. This model restricts unnecessary lateral movement between apps, services and systems, accounting for both insider threats and the possibility that an attacker might compromise a legitimate account. Limiting which parties have privileged access to sensitive data greatly reduces opportunities for hackers to steal it.

The concept of zero trust has been around for more than a decade, but it continues to evolve and grow. John Kindervag, a Forrester analyst at the time, introduced the revolutionary security model in 2010. Shortly thereafter, vendors such as Google and Akamai adopted zero-trust principles internally, before eventually rolling out commercially available zero-trust products and services.

Why is a zero-trust model important?

Interest in and adoption of zero trust have grown sharply in recent years, with a series of high-profile data breaches driving the need for better cybersecurity and the global COVID-19 pandemic spurring unprecedented demand for secure remote access technologies.

Traditionally, enterprises relied on technologies such as firewalls to build fences around corporate networks. In this model, an off-site user can access resources remotely by logging into a VPN, which creates a secure virtual tunnel into the network. But problems arise when VPN login credentials fall into the wrong hands, as happened in the 2021 Colonial Pipeline ransomware attack.

In the past, relatively few users required remote access, with most employees working on site. But enterprises now need to support secure remote access at scale, magnifying the risks associated with VPN use.

Additionally, the perimeter-based model was designed for a time when an organization's resources resided locally in an on-premises corporate data center. Now most enterprises' resources lie scattered across private data centers and multiple clouds, diffusing the traditional perimeter.

In short, the legacy approach to cybersecurity is becoming less effective, less efficient and more dangerous. In contrast to perimeter-based security, zero trust lets enterprises securely and selectively connect users to applications, data, services and systems on a one-to-one basis, whether the resources live on premises or in the cloud and regardless of where users are working.

Zero-trust adoption can offer organizations the following benefits:

- protection of sensitive data;

- support for compliance auditing;

- lower breach risk and detection time;

- visibility into network traffic; and

- better control in cloud environments.

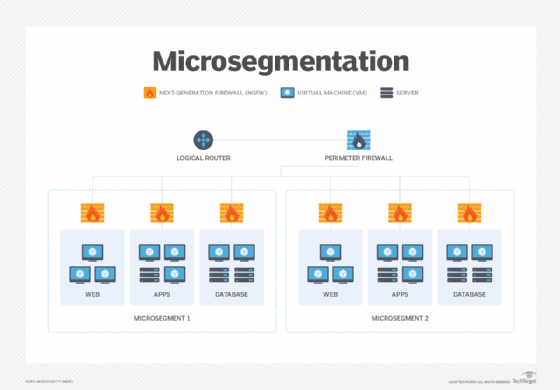

A zero-trust model also includes microsegmentation -- a fundamental principle of cybersecurity. Microsegmentation enables IT to wall off network resources in discrete zones, containing potential threats and preventing them from spreading laterally throughout the enterprise. With zero-trust microsegmentation, organizations can apply granular, role-based access policies to secure sensitive systems and data, preventing an access free-for-all and limiting potential damage.
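
The idea of walling off resources in zones can be sketched in a few lines of code. The following is a minimal illustration, not a production firewall: the segment names, IP addresses and ports are hypothetical, and real microsegmentation is enforced by network or host-level controls rather than application code. It shows the core rule, which is that traffic between zones is denied unless a flow is explicitly listed.

```python
# Hypothetical allow-list of flows between segments: (source, destination, port).
# Anything not listed here is denied.
ALLOWED_FLOWS = {
    ("web", "app", 8443),
    ("app", "db", 5432),
}

# Hypothetical mapping of workload IP addresses to segments.
SEGMENT_OF = {
    "10.0.1.10": "web",
    "10.0.2.10": "app",
    "10.0.3.10": "db",
}


def flow_permitted(src_ip: str, dst_ip: str, port: int) -> bool:
    """Allow a connection only if both ends are known and the flow is listed."""
    src = SEGMENT_OF.get(src_ip)
    dst = SEGMENT_OF.get(dst_ip)
    if src is None or dst is None:
        return False  # unknown workloads are never trusted
    return (src, dst, port) in ALLOWED_FLOWS


print(flow_permitted("10.0.1.10", "10.0.2.10", 8443))  # web to app
print(flow_permitted("10.0.1.10", "10.0.3.10", 5432))  # web straight to db
```

In this sketch, a compromised web server can reach the application tier on one port but cannot talk to the database directly, which is the lateral movement that microsegmentation is meant to contain.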

In a 2021 action that may put the federal government in the lead on zero-trust deployment, the White House issued an executive order calling on federal agencies to move toward a zero-trust security strategy, citing cloud adoption and the inevitability of data breaches as key drivers. Later that year, the U.S. Office of Management and Budget (OMB) published a draft strategy for executing on the presidential directive, and the Cybersecurity and Infrastructure Security Agency (CISA) released additional guidance in its Cloud Security Technical Reference Architecture and Zero Trust Maturity Model (ZTMM).

How does ZTNA work?

A major element of the zero-trust model, zero-trust network access (ZTNA) applies zero-trust concepts to an application access architecture.

In ZTNA, a controller or trust broker enforces an organization's preestablished access policies by facilitating or denying connections between users and apps, while hiding the network's location (i.e., IP address). The software authenticates users based on their identities and roles, as well as by contextual variables such as device security postures, times of day, geolocations and data sensitivity. Suspicious context could prompt a ZTNA broker to deny even an authorized user's connection request.

Once authenticated and connected, users can see only the applications they are authorized to access; all other network resources remain hidden.
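
To make the broker's decision logic concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions rather than any vendor's implementation: the `AccessRequest` fields, the `APP_ROLES` policy table, the allowed countries and the working hours are all hypothetical. It shows three behaviors described above: access is denied by default, context can block an otherwise authorized user, and users see only the applications their role permits.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    app: str
    device_compliant: bool  # hypothetical posture signal, e.g., patched and encrypted
    country: str
    local_time: time


# Hypothetical policy: which roles may reach which applications.
APP_ROLES = {
    "payroll": {"finance"},
    "wiki": {"finance", "engineering"},
}
ALLOWED_COUNTRIES = {"US", "CA"}           # assumed geolocation policy
WORK_HOURS = (time(6, 0), time(22, 0))     # assumed time-of-day policy


def decide(req: AccessRequest) -> bool:
    """Return True only if every check passes; everything else is denied."""
    allowed_roles = APP_ROLES.get(req.app)
    if allowed_roles is None or req.role not in allowed_roles:
        return False  # default deny: unknown app, or role without a grant
    if not req.device_compliant:
        return False  # unhealthy device blocks even an authorized user
    if req.country not in ALLOWED_COUNTRIES:
        return False
    start, end = WORK_HOURS
    return start <= req.local_time <= end


def visible_apps(role: str) -> list[str]:
    """List only the apps a role may access; all others stay hidden."""
    return sorted(app for app, roles in APP_ROLES.items() if role in roles)


request = AccessRequest("ana", "finance", "payroll", True, "US", time(9, 30))
print(decide(request))
print(visible_apps("engineering"))
```

A real broker would draw these signals from an identity provider and a device management system, and would typically evaluate them again during the session rather than only at connection time.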

Planning for zero trust

Experts agree that a zero-trust approach is critical in theory but often difficult to implement in practice. Organizations planning to embrace a zero-trust model should bear in mind the following challenges:

- Piecemeal adoption can leave security gaps. Because implicit trust is so ingrained in the traditional IT environment, it is virtually impossible to transition to a zero-trust framework overnight. Rather, implementation is almost always piecemeal, which can result in growing pains and security gaps.

- It can cause friction with legacy tech. Zero-trust tools may not always play nicely with legacy technology, creating technical headaches and potentially requiring major architectural, hardware and software overhauls.

- There are no easy answers. Because zero trust isn't a single product or technology, but instead an overarching strategy encompassing the entire IT environment, there's no simple path to full adoption.

- It's only as good as its access control. A zero-trust strategy hinges on identity and access control, requiring near-constant administrative updates to user identities, roles and permissions to be effective.

- It can hurt productivity. Zero trust's goal is to restrict user access as much as possible without unduly hindering the business. But overzealous policies can block users from resources they need, hampering productivity.

Organizations can work through these and other zero-trust challenges by running trials, starting small and scaling slowly.

Enterprises planning zero-trust transitions should also consider creating dedicated, cross-functional teams to develop strategies and drive implementation efforts. Ideally, a zero-trust team will include members with expertise in the following areas:

- applications and data security;

- network and infrastructure security;

- user and device identity; and

- security operations.

Team members can fill any knowledge gaps and gain specialized expertise via a variety of zero-trust training courses and certifications from organizations such as Forrester, (ISC)2, SANS and the Cloud Security Alliance.

Zero-trust use cases

As with any new security initiative, use cases should drive zero-trust adoption decisions. The following are four clear examples of how zero trust can help protect the enterprise:

- Secure third-party access

- Secure multi-cloud remote access

- IoT security and visibility

- Data center microsegmentation

What are the principles of a zero-trust model?

The zero-trust framework lays out a set of principles to remove inherent trust and ensure security using continuous verification of users and devices.

The following are five main principles of zero trust:

- Know your protect surface.

- Understand the security controls already in place.

- Incorporate new tools and modern architecture.

- Apply detailed policy.

- Monitor and alert.

The continuous aspect of zero trust also applies to the principles themselves. Zero trust isn't a set-it-and-forget-it strategy. Principles must be addressed via a continuous process model that restarts once a principle is achieved.

Zero trust vs. other technologies

The cybersecurity industry is rife with technologies, strategies and policies. It can be difficult to keep track of what's what. This is especially true in zero trust, which is not a technology but a framework of principles and technologies that apply those principles.

Let's look at how zero trust and other terms compare.

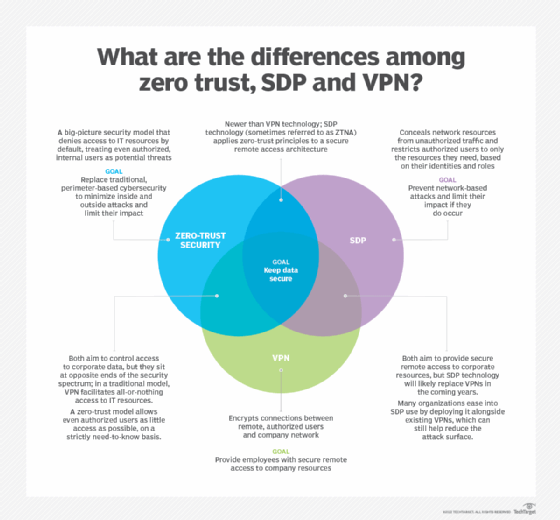

Zero trust vs. SDP

Like zero trust, a software-defined perimeter (SDP) aims to improve security by strictly controlling which users and devices can access what. Unlike zero trust, SDP is an architecture composed of SDP controllers and hosts that control and facilitate communications.

Many experts and vendors use the terms zero trust and SDP interchangeably. That said, the terms are evolving, and some now refer to ZTNA as SDP 2.0.

Zero trust vs. VPN

Zero trust and VPNs share the goal of ensuring security, but the efficacy of legacy perimeter security technology has come under scrutiny over the past decade.

VPNs, which have long been used to connect remote users and devices to corporate networks, have faced difficulty securing increasing numbers of remote workers and cloud services used in the modern enterprise. Zero trust is expected to supplant aging VPN technology because it can better secure perimeter-less enterprises.

Don't tear out those VPNs yet, however. Zero trust and VPNs can be used in tandem. For example, zero-trust microsegmentation, in conjunction with a VPN, can reduce a company's attack surface -- although not as much as a full zero-trust initiative would -- and can prevent damaging lateral movements and attacks, should a breach occur.

Zero trust vs. zero-knowledge proof

While they may sound similar, zero trust and zero-knowledge proof overlap only slightly in terms of technology.

Zero-knowledge proof is a methodology that can be used when one party wants to prove the validity of information to a second party without sharing any of the information. Cryptographic algorithms based on zero-knowledge proof enable the proving party to mathematically show the information is true.

Zero-knowledge proofs can be used to authenticate users without divulging their identities. Some two-factor (2FA) and multifactor authentication (MFA) methods use zero-knowledge proofs. The overlap with zero trust is that 2FA and MFA are critical technologies in a zero-trust strategy.
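
As an illustration of how one party can prove it holds a secret without sending it, the following sketch walks through one round of the Schnorr identification protocol, a classic interactive zero-knowledge proof of knowledge. The choice of Schnorr is an assumption made here for illustration and is not taken from any product discussed in this guide. The group parameters are toy values that are far too small for real use.

```python
import secrets

# Toy parameters, far too small to be secure: G has prime order Q modulo P.
P, Q, G = 23, 11, 2


def keygen():
    """Prover picks a secret x and publishes y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1  # secret in [1, Q-1]
    return x, pow(G, x, P)


def commit():
    """Prover picks a one-time nonce r and sends t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def respond(x, r, c):
    """Prover answers the verifier's challenge c without revealing x."""
    return (r + c * x) % Q


def verify(y, t, c, s):
    """Verifier checks G^s == t * y^c (mod P) using public values only."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()              # x stays with the prover; y is public
r, t = commit()              # prover -> verifier: t
c = secrets.randbelow(Q)     # verifier -> prover: random challenge
s = respond(x, r, c)         # prover -> verifier: s
print(verify(y, t, c, s))
```

The verifier sees only `y`, `t`, `c` and `s`. Because the nonce `r` is random and used once, the response `s` does not reveal `x`, yet only someone who knows `x` can answer an unpredictable challenge correctly.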

Zero trust vs. principle of least privilege

The principle of least privilege (POLP) is a security concept that gives users and devices only the access rights required to do their jobs and nothing more. This includes access to data, applications, systems and processes. If a device's or user's credentials are compromised, least privilege access ensures a malicious actor can only access what that user has permission to access and not necessarily the entire network.

Zero trust and POLP are similar in that they both restrict user and device access to resources. Unlike POLP, zero trust also focuses on user and device authentication and authorization. Zero-trust policies are often based on POLP, yet they continuously reverify authentication and authorization.
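
The difference can be sketched in code. In this minimal example, the `GRANTS` table is the least-privilege part: each role holds only the permissions its job needs. The freshness check in `authorize` is the zero-trust part: even a valid grant is refused once the last verification is too old. The role names, permission strings and the five-minute window are assumptions chosen for illustration.

```python
import time

# Hypothetical least-privilege grants: each role gets only what its job needs.
GRANTS = {
    "analyst": {"reports:read"},
    "dba": {"reports:read", "db:admin"},
}

REVERIFY_AFTER_SECONDS = 300  # assumed policy value


class Session:
    def __init__(self, user, role, now=None):
        self.user = user
        self.role = role
        # Time of the last successful authentication check.
        self.verified_at = time.monotonic() if now is None else now

    def reverify(self, now=None):
        """Record a fresh authentication, e.g., after an MFA prompt succeeds."""
        self.verified_at = time.monotonic() if now is None else now


def authorize(session, permission, now=None):
    """Check freshness first, then the least-privilege grant."""
    now = time.monotonic() if now is None else now
    if now - session.verified_at > REVERIFY_AFTER_SECONDS:
        return False  # verification is stale; the user must reverify
    return permission in GRANTS.get(session.role, set())


session = Session("ana", "analyst", now=0.0)
print(authorize(session, "reports:read", now=10.0))   # fresh and granted
print(authorize(session, "db:admin", now=10.0))       # fresh but not granted
print(authorize(session, "reports:read", now=301.0))  # granted but stale
```

The optional `now` argument exists only so the behavior can be demonstrated without waiting; a real system would also recheck device posture and other context on each request.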

Zero trust vs. defense in depth

A defense-in-depth security strategy involves multiple layers of processes, people and technologies to protect data and systems. The belief is that a layered security approach protects against human-caused misconfigurations and ensures most gaps between tools and policies are covered.

Defense in depth may be stronger than zero trust, in that if one layer of security fails, other layers pick up the slack and protect the network. Zero trust is often more appealing, however, because its never-trust, always-verify stance ensures that if attackers infiltrate a network, they won't be there for long before they need to be reverified, and that zero-trust microsegmentation will limit what they can access.

Including defense-in-depth principles in a zero-trust framework can make the security strategy even stronger.

How to 'buy' zero trust

Vendor messaging around zero trust can be confusing -- even downright incorrect. No one-size-fits-all, out-of-the-box zero-trust product or suite of products exists. Rather, zero trust is the overarching strategy involving a collection of tools, policies and procedures that build a strong barrier around workloads to ensure data security.

That said, several zero-trust-enabled products are available to include in a zero-trust deployment, and ZTNA offerings make up many of them. To qualify as a true ZTNA product, an offering must be identity-centric, deny access by default and be context-aware.

ZTNA has two basic architectures:

- Endpoint-initiated ZTNA. Also known as agent-based ZTNA, this involves deploying software agents on each network endpoint. A broker decides, based on policy, whether a user or device can access a resource. If access is allowed, a gateway initiates a session to permit it.

- Service-initiated ZTNA. Also called clientless ZTNA, this involves using a connector appliance in an organization's private network that initiates connections to the ZTNA provider's cloud. A ZTNA controller initiates a session if the user and device meet policy requirements.

Enterprises can opt for as-a-service offerings or self-hosted ZTNA deployments. ZTNA as a service is popular due to its scalability and manageability. On the other hand, self-hosted ZTNA can offer organizations greater control.

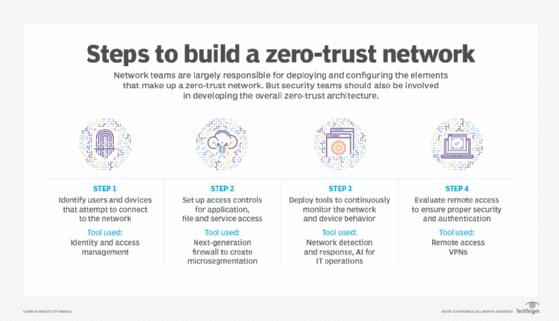

Steps to implement zero trust

A successful zero-trust implementation requires attention to what the Forrester Zero Trust eXtended (ZTX) model calls the "seven pillars of zero trust":

- Workforce security

- Device security

- Workload security

- Network security

- Data security

- Visibility and analytics

- Automation and orchestration

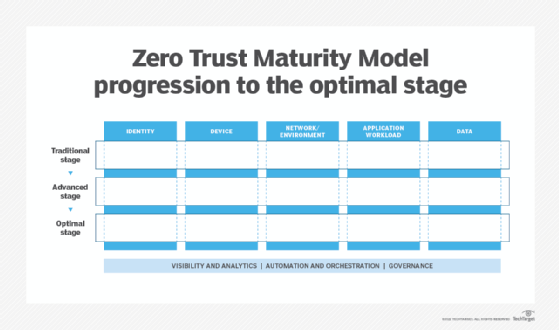

OMB, in its 2021 document complementing the executive order, which requires federal agencies to achieve zero-trust goals by the end of 2024, and CISA, in its ZTMM, align with the ZTX pillars, adding "governance" as an eighth pillar.

ZTX and ZTMM are just two approaches to adopting zero trust. Both aim to help organizations establish and execute on a zero-trust strategy. While ZTX is for any organization, the ZTMM was designed for federal agencies, although any company can use the information to implement a strategy.

The CISA ZTMM also outlines three stages of zero-trust adoption:

- Traditional zero-trust architecture

- Advanced zero-trust architecture

- Optimal zero-trust architecture

Once an organization is ready to adopt zero trust, it is highly beneficial to approach it in phases. The following are seven steps to implement zero trust:

- Form a dedicated zero-trust team. Zero trust is a team sport. Choosing the right team members may mean the difference between success and hardship. For example, when deciding who manages zero-trust deployments, consider who has the most expertise in that specific area. Security teams often develop and maintain a zero-trust strategy. But if zero trust is being deployed across networking-specific areas -- such as managing and configuring network infrastructure tools and services, including switches, routers, firewalls, VPNs and network monitoring tools -- then the networking team should take charge.

- Choose a zero-trust implementation on-ramp. An organization generally approaches zero trust at one particular on-ramp. The three on-ramp options are user and device identity, applications and data, and the network.

- Assess the environment. Review the controls already in place where zero trust is being deployed, as well as the level of trust the controls provide and what gaps need to be filled. Many organizations may be surprised to learn they already have pieces of the zero-trust puzzle in place. Organizations should start by comparing their current security strategy with a zero-trust cybersecurity audit checklist based on the ZTMM. The comparison will reveal which zero-trust processes are already in place and where gaps exist that need addressing.

- Review the available technology. Review the technologies and methodologies needed to build out the zero-trust strategy.

- Launch key zero-trust initiatives. Compare the assessment with the technology review, then launch the zero-trust deployment.

- Define operational changes. Document and assess any changes to operations. Modify or automate processes where necessary.

- Implement, rinse and repeat. As zero-trust initiatives are put into place, measure their effectiveness and adjust as needed. Then, start the process all over again.

Remember: Zero trust is a journey, not a destination. Run trials, start small and then scale deployments. It takes a lot of planning and teamwork, but in the end, a zero-trust security model is one of the most important initiatives an enterprise can adopt, even if it hits bumps along the way.