
Compare managed Kubernetes services from AWS and Azure

AWS and Azure have both rolled out a managed service for Kubernetes, and while their overall aim is the same, there are key differences users should note.

The focus of container product development has shifted from the runtime engine and image format, where Docker quickly won, to workload orchestration and cluster management, where Kubernetes has become the standard.

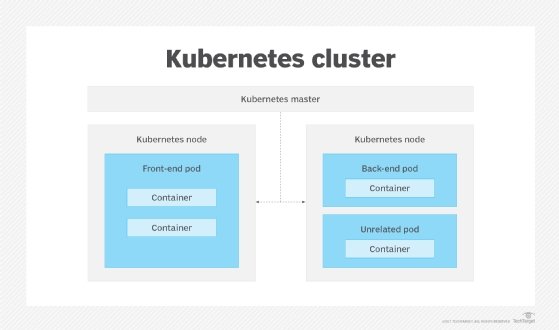

Kubernetes, however, presents a steep learning curve and can be complex to manage. Cloud platforms, such as Amazon Web Services (AWS) and Microsoft Azure, reduce the complexity of running a Kubernetes cluster. But, until recently, these platforms still required users to install and manage the underlying compute instances, as well as cluster management software.

Now, the three major cloud providers offer fully managed Kubernetes services that eliminate this overhead. Amazon Elastic Container Service for Kubernetes (EKS) and Azure Container Service (AKS) have joined the previously released Google Kubernetes Engine (GKE), as well as third-party services, like Platform9 and StackPointCloud, as the most convenient ways to deploy and operate production-grade Kubernetes clusters.

But how do these new offerings compare? Here's a look at the key differences between Amazon EKS and Microsoft's AKS, along with some guidance on choosing between these two managed Kubernetes services.

AKS overview

AKS differs from the prior version of Azure Container Service in that Azure runs the entire Kubernetes control plane, providing self-healing clusters, single-click scaling and a pretested repository of Kubernetes versions that users can install with a one-line command.

AKS, overall, has a simple command structure. For example, users can create a Kubernetes cluster with a single command:

az aks create -n myCluster -g myResourceGroup

The service makes it easier to change Kubernetes versions or scale the number of cluster nodes. Since admins need to manually configure and change the number of nodes via the Azure command-line interface or portal, a test AKS installation might start with a single node for all pods -- a group of containers with a shared configuration, storage and network.

While AKS does not currently support autoscaling for the number of agent nodes in a cluster, it does support horizontal pod autoscaling using CPU requests, as well as limits in the pod configuration. Similarly, although AKS won't automatically update the Kubernetes version in a cluster, users can select from several pretested releases. This allows you to update a cluster installed with version 1.7.7 to 1.8.1 or 1.8.2 with a single command:

az aks upgrade --name myK8sCluster --resource-group myResourceGroup --kubernetes-version 1.8.2
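As for the horizontal pod autoscaling mentioned above, the following Python sketch illustrates the scaling rule described in the upstream Kubernetes documentation: the desired replica count is the current count multiplied by the ratio of observed to target CPU utilization, rounded up and clamped to the configured bounds. The function name, default bounds and sample figures are illustrative assumptions, not part of AKS, and the real controller also applies a tolerance band and stabilization behavior that this sketch omits.

```python
import math


def desired_replicas(current_replicas: int,
                     current_cpu_pct: float,
                     target_cpu_pct: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Approximate the Horizontal Pod Autoscaler replica calculation.

    CPU utilization is expressed as a percentage of each pod's CPU request.
    The bounds are hypothetical defaults chosen for illustration.
    """
    if target_cpu_pct <= 0:
        raise ValueError("target_cpu_pct must be positive")
    # Scale the replica count by the ratio of observed to target utilization.
    raw = math.ceil(current_replicas * current_cpu_pct / target_cpu_pct)
    # Keep the result inside the configured minimum and maximum.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    print(desired_replicas(3, 90, 50))
```

With three replicas running at 90% of their CPU request and a 50% target, the sketch asks for six replicas. If the cluster's nodes cannot fit the extra pods, an admin still has to add nodes manually.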

AKS is still in preview and is available in five Azure regions: Central US, East US, Canada Central, Canada East and West Europe. Each Azure account can have a maximum of five clusters, with each cluster limited to 250 nodes and a maximum of 110 pods per node.
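A quick way to sanity-check a planned deployment against these preview limits is to work through the arithmetic. The Python sketch below uses only the figures quoted above; the function names are hypothetical, and the limits may well change once AKS leaves preview. It also ignores CPU and memory constraints, which usually cap pod density well before the per-node limit does.

```python
# Preview limits quoted in the article; they may change after the preview.
MAX_CLUSTERS_PER_ACCOUNT = 5
MAX_NODES_PER_CLUSTER = 250
MAX_PODS_PER_NODE = 110


def max_pods_per_cluster() -> int:
    """Upper bound on pods in one cluster under the quoted limits."""
    return MAX_NODES_PER_CLUSTER * MAX_PODS_PER_NODE


def nodes_needed(pod_count: int) -> int:
    """Minimum node count for pod_count pods, counting only the pod limit."""
    if pod_count < 0:
        raise ValueError("pod_count must not be negative")
    # Ceiling division without floating point.
    nodes = -(-pod_count // MAX_PODS_PER_NODE)
    if nodes > MAX_NODES_PER_CLUSTER:
        raise ValueError("workload exceeds the per-cluster node limit")
    return nodes


if __name__ == "__main__":
    print(max_pods_per_cluster())
    print(max_pods_per_cluster() * MAX_CLUSTERS_PER_ACCOUNT)
    print(nodes_needed(1000))
```

Under these limits, a single cluster tops out at 27,500 pods and an account at 137,500, while a 1,000-pod workload needs at least 10 nodes.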

The Kubernetes cluster management feature of AKS is free; users pay only for the VM instances, storage and networking resources they use. The Azure cluster cost estimator is the easiest way to price a deployment.
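Because the control plane carries no charge, a rough monthly estimate reduces to the sum of the underlying resources. The following Python sketch shows that arithmetic; the function name, the 730-hour month and every price in the usage example are placeholder assumptions rather than actual Azure rates, so treat the official estimator as the source of truth.

```python
HOURS_PER_MONTH = 730  # assumed average number of hours in a month


def estimate_monthly_cost(node_count: int,
                          vm_hourly_rate: float,
                          storage_monthly: float = 0.0,
                          network_monthly: float = 0.0,
                          cluster_management_fee: float = 0.0) -> float:
    """Rough monthly cost of a cluster; all prices are caller-supplied.

    cluster_management_fee defaults to zero because the article states
    that AKS cluster management is free.
    """
    if node_count < 0:
        raise ValueError("node_count must not be negative")
    compute = node_count * vm_hourly_rate * HOURS_PER_MONTH
    return compute + storage_monthly + network_monthly + cluster_management_fee


if __name__ == "__main__":
    # Hypothetical prices: three nodes at 0.10 per hour,
    # plus 20 for storage and 5 for networking per month.
    print(round(estimate_monthly_cost(3, 0.10, 20.0, 5.0), 2))
```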

Amazon EKS overview

AWS introduced EKS as a preview service at re:Invent 2017, and, like AKS, it manages and scales Kubernetes clusters. But, unlike AKS, EKS is in a limited-availability preview, so there's no publicly available documentation, pricing or access to the management UI.

While we don't know all the details about EKS, it does have significant architectural and feature differences compared to AKS. Most importantly, EKS is inherently resilient to major infrastructure failures, as it automatically distributes Kubernetes master schedulers and controllers across three availability zones (AZs). If you lose a master scheduler or an entire AZ, it won't affect the remaining clusters or cause applications to fail.
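The reasoning behind a three-zone layout can be illustrated with a short Python sketch. It assumes the control plane's state store needs a strict majority of members to stay available, as etcd does in upstream Kubernetes, and that one member runs in each zone. The internals of EKS are not public, so this illustrates the general principle rather than how AWS implements it, and the function names are hypothetical.

```python
def quorum_size(members: int) -> int:
    """Smallest strict majority of a group with the given member count."""
    if members < 1:
        raise ValueError("members must be at least 1")
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can fail while a majority remains."""
    return members - quorum_size(members)


def survives_zone_loss(zones: int, lost_zones: int = 1) -> bool:
    """Assumes one control-plane member per zone (an illustrative assumption)."""
    return lost_zones <= tolerated_failures(zones)


if __name__ == "__main__":
    for zones in (1, 2, 3):
        print(zones, quorum_size(zones), survives_zone_loss(zones))
```

Under these assumptions, three zones is the smallest layout that keeps a majority after losing one zone; two zones offer no more failure tolerance than one.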

EKS also detects and replaces masters that fail health checks. In addition, unlike AKS, admins can configure EKS to automatically patch and upgrade clusters to the latest Kubernetes minor version -- though major version upgrades still require manual initiation.

Since EKS is based on open source Kubernetes -- currently version 1.7, with planned support for the three most recent versions -- it is, like AKS, compatible with existing plug-ins, scripts and configurations. This also means that an application that runs on existing Kubernetes clusters, whether on premises or hosted, should work without modification when migrated to AWS. Like other cloud-based container services, EKS workloads and infrastructure can use other Amazon services, such as Elastic Load Balancing, Identity and Access Management and CloudTrail.

Decision criteria for managed Kubernetes services

Both AWS and Azure are playing catch-up in the market for managed container services, and both of their offerings are relatively untested. Not surprisingly, given Google's history with container technology, both EKS and AKS lack features available in GKE, such as instance autoscaling, the use of mixed instance types within a cluster and automated workload scheduling.

A primary reason organizations adopt containers is to improve portability across public and private clouds. Because AKS, EKS and GKE are all based on open source Kubernetes, the barrier to moving workloads around in a hybrid cloud is lower. Still, each service has unique configuration details and cloud service interfaces, which can hinder workload migration.

To mitigate these challenges, use a cloud-agnostic Kubernetes manager, such as Platform9 or StackPointCloud -- especially if you want to use Kubernetes in a multi-cloud environment. Otherwise, stick with the managed Kubernetes service from your preferred cloud provider -- granted, AWS users have to wait before being able to run production workloads.