How to calculate cloud migration costs before you move

Here's a primer on how to calculate the total cost of a cloud migration and compare your on-premises expenses to what you'll spend on the move.

Determining the cost of a cloud migration isn't easy. Not only do businesses need to account for the differences between on-premises and cloud prices, they also must consider a variety of other factors -- many of which are often overlooked.

Some costs are easy to predict. For example, the cost of migrating data from on-premises storage to cloud-based object storage is straightforward. Other migration costs, like those incurred from workload refactoring, are more difficult to nail down. It's also easy to overlook expenses associated with things like staffing and deploying new types of services.

Factor in the following costs to make sure a cloud migration will be financially beneficial.

Calculate on-premises costs

Calculating cloud migration costs starts before you move anything to the cloud. Admins need to assess the cost of existing hardware and software assets, then evaluate how those costs compare to a cloud-based environment.

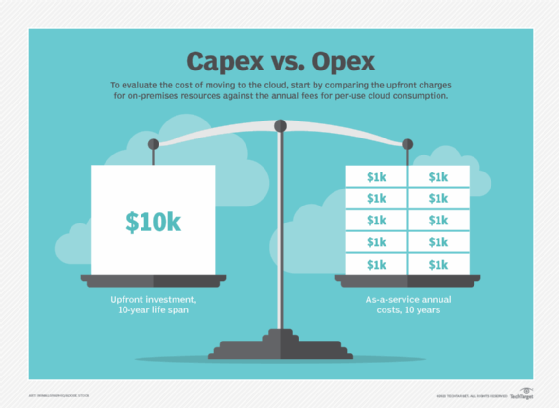

The challenge in this comparison is that cost models for most on-premises software and hardware are different from cloud pricing models. On-premises computing requires a significant upfront capital investment to purchase hardware and follows a Capex-based cost model. On the other hand, cloud resources rarely require capital expenditure and follow an Opex-based model. Customers pay for IaaS-based virtual hardware and SaaS applications as they consume them.

This means you'll need to express your on-premises Capex cost in a way that allows you to compare it to Opex costs in the cloud. To do so, divide the upfront cost of your on-premises resources by the amount of time you can reasonably expect to use them.
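As a rough illustration of that division, the sketch below spreads an upfront purchase evenly over its expected life to get a monthly figure. The function name, the $6,000 price and the five-year lifespan are hypothetical values chosen for the example, not figures from any vendor.

```python
def monthly_amortized_cost(upfront_cost: float, useful_life_years: float) -> float:
    """Spread an upfront purchase evenly across its expected useful life, per month."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return upfront_cost / (useful_life_years * 12)


# Hypothetical example: a $6,000 server expected to last five years.
server_monthly = monthly_amortized_cost(6_000, 5)
print(f"${server_monthly:.2f} per month")  # $100.00 per month
```

A monthly figure like this can be set next to a monthly cloud bill, which is the point of the conversion.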

These estimates aren't exact. They don't account for costs such as replacing a server's hard disks, which may not last as long as the server itself. They also don't factor in the potential for hardware upgrades, like the addition of memory, that could extend the server's lifecycle. Still, this approach helps you establish a baseline estimate of the total cost of your on-premises environment, which you can then compare to the cost of equivalent services in the cloud.

You also need to identify on-premises resources that you won't pay for in the cloud. For example, network switches for your on-premises data center are unnecessary when you move workloads to a public cloud. Uninterruptible power supply units and network-attached storage devices are also examples of equipment that you can decommission when you move to the cloud.

Certain on-premises operating expenses, such as electricity and physical site security, also disappear post-migration.

Refactoring considerations

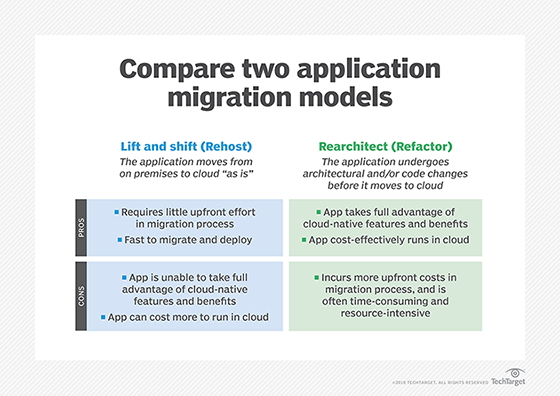

In the simplest scenario, admins will take applications that currently run in on-premises VMs, as well as data housed in scale-out on-premises storage, and move them to public cloud compute and storage services. In this case, workloads won't require any major refactoring, and the cloud services will have relatively simple pricing models. Migration costs are easy enough to calculate.

On the other hand, your cloud migration plan might go beyond a simple lift and shift and also entail transforming those workloads. In these cases, the migration will require more development effort to modify your workloads.

For example, you might run your applications inside VMs but want to migrate some to containers and serverless functions. Or you might have monolithic applications that you plan to refactor as microservices. These modifications can be expensive. Using a more complex menu of cloud services often requires more expertise to administer effectively, which can also translate to more expenses.

Calculate cloud costs

After you estimate the cost of your on-premises environment, you can calculate the costs of the cloud environment you plan to build and compare the two.
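One simple way to frame that comparison is a break-even estimate: how many months of savings it takes to recover the one-time cost of the move. The sketch below assumes you already have monthly figures for both environments and a single lump-sum migration cost. The function name and all dollar amounts are hypothetical.

```python
import math


def breakeven_months(onprem_monthly, cloud_monthly, one_time_migration_cost):
    """Months until cumulative monthly savings cover the one-time migration cost.

    Returns None when the cloud environment is not cheaper per month,
    because the migration cost is then never recovered.
    """
    monthly_savings = onprem_monthly - cloud_monthly
    if monthly_savings <= 0:
        return None
    return math.ceil(one_time_migration_cost / monthly_savings)


# Hypothetical figures: $10,000/month on premises, $8,000/month in the cloud,
# and $30,000 in one-time migration costs.
print(breakeven_months(10_000, 8_000, 30_000))   # 15
print(breakeven_months(10_000, 11_000, 30_000))  # None
```

This ignores discounting and changes in usage over time, so treat the result as a first-pass check rather than a forecast.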

Virtually all your cloud spending will be on operating expenses that are billed monthly. However, calculating cloud costs is difficult because there are so many variables. Each cloud vendor has a different pricing schedule for each of its services.

Many prices depend on which region you use and how many resources you consume. You'll pay a lower per-gigabyte rate for cloud storage at high volumes than you would when storing just a few gigabytes, for instance. Cloud service prices also vary depending on whether you reserve the resources ahead of time or consume them as you go.
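To see how volume-based rates play out, here is a minimal sketch of tiered storage pricing, where each tier's rate applies only to the gigabytes that fall inside that tier. The tier boundaries and per-gigabyte prices are invented for illustration and do not reflect any vendor's actual price list.

```python
# Hypothetical tiers: (tier size in GB, price per GB per month).
# A size of None means the tier has no upper bound.
HYPOTHETICAL_TIERS = [
    (50_000, 0.025),  # first 50,000 GB
    (None, 0.020),    # everything above that
]


def tiered_storage_cost(total_gb, tiers=HYPOTHETICAL_TIERS):
    """Monthly storage cost when each tier's rate applies only to usage within that tier."""
    remaining = total_gb
    cost = 0.0
    for size, price in tiers:
        if remaining <= 0:
            break
        billed = remaining if size is None else min(remaining, size)
        cost += billed * price
        remaining -= billed
    return cost


print(f"{tiered_storage_cost(1_000):.2f}")    # 25.00
print(f"{tiered_storage_cost(100_000):.2f}")  # 2250.00
```

In this example, the effective rate drops from $0.025 per gigabyte at 1,000 GB to $0.0225 per gigabyte at 100,000 GB.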

Cloud cost calculators

The best way to calculate cloud costs is to use a calculator tool designed for this purpose. All the major cloud vendors have their own calculators, such as:

- AWS Pricing Calculator

- Microsoft Azure Pricing Calculator

- Google Cloud Pricing Calculator

There are also additional tools -- like the Azure Total Cost of Ownership (TCO) Calculator -- designed to help estimate the cost difference between your existing, on-premises environment and what you'll pay in the cloud.

These native calculators only work for each vendor's specific cloud. If you're looking for a third-party alternative that will help you estimate or compare costs on multiple clouds, services like Apptio Cloudability and CloudCheckr can help.

However, these platforms aren't cost calculators as much as they are cost-optimization and capacity management tools that support multiple public clouds. They may help you identify the most cost-efficient cloud for your needs, but they won't predict your costs as precisely as one of the cloud vendors' own calculators.

Auxiliary cloud services

Another factor to consider is how many "auxiliary" services you will use when you move to the cloud. Auxiliary services include things like content delivery networks to help distribute your content, availability zones to improve resiliency, and DDoS protection. These services are typically important for on-premises workloads, and while not strictly necessary in the public cloud, they are valuable add-ons for enhancing cloud workload security and performance.

While auxiliary services are useful additions, the more you use, the higher the operating and configuration costs will be during and after a cloud migration.

Hidden cloud migration costs

Migrating to the cloud comes with a variety of costs that can be easy to overlook, but they are still critical to anticipate. Don't leave out the following potential cloud migration costs when planning your move:

- Massive data migrations. If you have an exceptionally large volume of data to move to the cloud, the network might be insufficient for the task. You may have to use a physical transfer service like AWS Snowmobile, which moves up to 100 petabytes at a time by truck.

- Labor. Your existing IT team may be able to transfer workloads easily enough if they have the requisite skills. If not, you'll need to hire an IT services company that offers cloud migration.

- Consulting. Depending on your level of in-house cloud expertise, you may decide to work with a consulting company that specializes in planning and managing cloud migrations.

- Backup. Although cloud data storage may be more reliable than on-premises storage, you should still back it up, either on premises or to another cloud region or cloud.
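To judge whether your network is sufficient for a large data migration, a rough transfer-time estimate is a useful first check. The sketch below uses decimal units (1 TB = 8,000,000 megabits) and assumes a sustained utilization factor. The function name, the 80% default and the example figures are assumptions for illustration.

```python
def transfer_days(data_terabytes, link_mbps, utilization=0.8):
    """Estimate the days needed to move data over a network link.

    Uses decimal units: 1 TB = 10**12 bytes = 8,000,000 megabits.
    utilization is the assumed fraction of link bandwidth actually sustained.
    """
    if link_mbps <= 0 or not 0 < utilization <= 1:
        raise ValueError("link_mbps must be positive and utilization in (0, 1]")
    megabits = data_terabytes * 8_000_000
    seconds = megabits / (link_mbps * utilization)
    return seconds / 86_400  # seconds per day


# Hypothetical example: 500 TB over a 1 Gbps link at 80% sustained utilization.
print(f"{transfer_days(500, 1_000):.1f} days")  # 57.9 days
```

If the estimate runs to months, a physical transfer service or a faster dedicated link is worth pricing out.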

Cloud management and administration

Cloud migration costs are also affected by the extent to which your administration and management tools must be overhauled.

Public cloud services usually require configurations like identity and access management policies to manage access control. You may also use tools like AWS Step Functions and AWS Auto Scaling to help automate your cloud workflows. And for large-scale cloud environments, you'll likely want to use infrastructure as code (IaC) tools to automate provisioning and deployment.

In some cases, you can reuse your on-premises configurations and tools during your cloud migration. If you use an IaC tool that works with both on-premises and cloud infrastructure, you can likely take the IaC policies you already have and reuse them in the cloud.

However, setting up other tools and configurations will add to your cloud migration costs. There is typically no efficient way to migrate on-premises access-control policies into a public cloud, for instance, so expect to spend time and money re-creating them.

Orchestration costs

Depending on the nature of your cloud workloads, you may choose to host them using a container orchestration platform like Kubernetes.

Kubernetes adds another cost to your cloud migration plans. If you're not currently using Kubernetes at all, you'll need to set it up, which demands significant time and expense. Even if you already use Kubernetes on premises, don't assume that Kubernetes in the cloud will cost the same. Managed Kubernetes services have complex pricing models that you'll want to study closely to calculate your cloud migration costs.
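As a starting point for studying those pricing models, the sketch below assumes a simple structure: a per-cluster control plane fee plus per-node compute charges, both billed hourly. Real services may bill differently and add charges for storage, load balancers and data transfer. The function name and all rates here are hypothetical.

```python
HOURS_PER_MONTH = 730  # common billing approximation: 8,760 hours / 12 months


def managed_k8s_monthly(clusters, control_plane_hourly, nodes, node_hourly):
    """Rough monthly cost: control plane fee per cluster plus worker node compute."""
    control_plane = clusters * control_plane_hourly * HOURS_PER_MONTH
    workers = nodes * node_hourly * HOURS_PER_MONTH
    return control_plane + workers


# Hypothetical rates: $0.10/hour per cluster, six worker nodes at $0.20/hour each.
print(f"${managed_k8s_monthly(1, 0.10, 6, 0.20):,.2f}")  # $949.00
```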

Infrastructure write-off

When you move to the cloud, you typically have to decommission the infrastructure that hosted your workloads on premises. While this isn't a cost per se, it's worth thinking about how much value you'll be writing off by ceasing to use servers and other infrastructure that have useful life remaining.

If your company spent millions on server hardware two years ago, for example, some amount of that investment will be wasted in your move to the cloud, where you can't use that infrastructure -- unless you choose a hybrid cloud architecture. Exactly how much this write-off will "cost" depends on how much life your hardware still has, and whether you are able to repurpose or resell any of it.
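One way to put a number on that write-off is a straight-line estimate: assume the hardware loses value evenly over its useful life, then subtract whatever you can recover by reselling it. The sketch below takes that approach. The function name, the five-year life and the dollar figures are hypothetical, and your accounting team may use a different depreciation method.

```python
def write_off_estimate(purchase_price, useful_life_years, years_in_service, resale_value=0.0):
    """Straight-line estimate of the value lost by retiring hardware early.

    Remaining book value minus any resale proceeds, never below zero.
    """
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    # Clamp to [0, 1] so hardware past its useful life has no remaining value.
    used_fraction = min(max(years_in_service / useful_life_years, 0.0), 1.0)
    book_value = purchase_price * (1 - used_fraction)
    return max(book_value - resale_value, 0.0)


# Hypothetical example: $2 million of servers bought two years ago,
# a five-year expected life and $200,000 recoverable through resale.
print(f"${write_off_estimate(2_000_000, 5, 2, 200_000):,.0f}")  # $1,000,000
```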