Public cloud metrics and KPI tools your business should know

Metrics and KPIs for public cloud deployments can be overwhelming. Learn how cloud architects can track usage of compute, storage, database resources and more.

Monitoring and management are central to any public cloud deployment strategy. Proper management helps an organization ensure that the utilization, performance, cost and even business benefits of the public cloud meet or exceed requirements. Monitoring can often reveal service gaps or problems that an organization should know about.

To rectify these issues, cloud architects, administrators and developers need the right tools. Public cloud providers have a selection of tools that offer granular detail tailored to their platforms. There are also third-party offerings that support one or more public clouds. Your chosen tool must be able to deliver an array of useful metrics that support business needs and goals.

Let's outline a range of metrics and parameters that can help businesses oversee public cloud usage, and consider several tools that can gather the desired information.

Metrics vs. KPIs

Before we dive into specific metrics, let's start with a quick distinction between metrics and KPIs. While the terms are sometimes used interchangeably, this is not technically correct.

A metric is a parameter that can be measured directly and tracked over time to determine trends. For example, the request count in a load balancer service and the CPU utilization of a compute instance are metrics.
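To illustrate what tracking a metric over time to determine a trend can look like, here is a minimal sketch. The CPU utilization readings are hypothetical sample values, and the simple moving average is only one of many possible ways to smooth a metric; neither comes from a specific cloud provider.

```python
from statistics import mean


def moving_average(samples, window):
    """Return the simple moving average of samples over a fixed window."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        mean(samples[i - window + 1 : i + 1])
        for i in range(window - 1, len(samples))
    ]


# Hypothetical CPU utilization readings (percent), one per sampling interval.
cpu_utilization = [40.0, 42.0, 47.0, 55.0, 61.0, 70.0]

# Smooth out short-lived spikes, then compare the ends of the series.
smoothed = moving_average(cpu_utilization, window=3)
trend = "rising" if smoothed[-1] > smoothed[0] else "flat or falling"
print(smoothed, trend)
```

A wider window smooths more aggressively but reacts more slowly to real changes, so the window size is a judgment call for each metric.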

In comparison, a KPI -- also called a key performance indicator -- typically represents a specialized parameter that is important to business performance or growth. A KPI can be measured directly. For example, the monthly cost of a cloud provider's services might be considered a KPI because it has particular value to the business.

However, KPIs are more commonly derived or calculated through a combination of metrics. For example, a business KPI such as cost per transaction can't be measured directly from a cloud provider, because your cost per transaction is not relevant to the cloud provider. Instead, it might be calculated from metrics such as throughput and monthly billing.
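As a rough sketch of how such a derived KPI might be computed, the following divides a monthly bill by the number of completed transactions. The function name and the figures are hypothetical and chosen only for illustration.

```python
def cost_per_transaction(monthly_cost, transactions):
    """Derive a cost-per-transaction KPI from billing and throughput metrics."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return monthly_cost / transactions


# Hypothetical inputs: a monthly bill in USD and a transaction count
# gathered from the workload's throughput metrics.
monthly_bill_usd = 12_500.00
completed_transactions = 2_500_000

kpi = cost_per_transaction(monthly_bill_usd, completed_transactions)
print(f"Cost per transaction: ${kpi:.4f}")
```

Neither input is a KPI on its own here; the business value comes from combining them.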

Let's first focus on metrics, because these parameters can generally be easily or directly measured from the cloud provider.

Public cloud infrastructure metrics

Public cloud infrastructure is perhaps the most common and objective source of metrics for cloud users. These metrics measure what's happening with the provider's resources and services.

Organizations have frequently complained about a lack of transparency on public clouds, but providers have made strides in these areas in recent years. For example, they now offer access to infrastructure KPIs and metrics through common interfaces such as consoles and APIs.

With infrastructure metrics, businesses can measure resource and service utilization, and determine application availability, performance, health and so on. This makes infrastructure metrics key to effective public cloud management.

Cloud administrators can select from what seems like a bewildering array of data points. While it's not necessary, or even desirable, to monitor every available metric, here are some of the most common infrastructure data points.

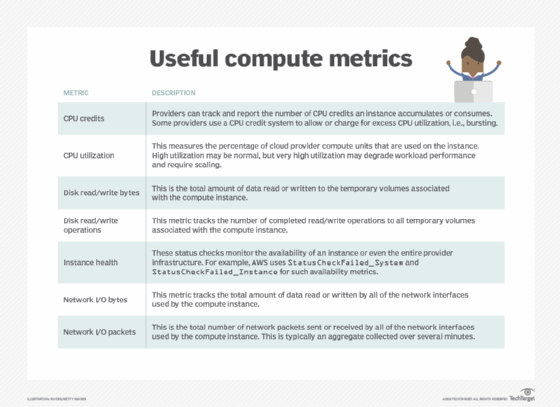

Compute instances

Compute metrics relate to the volume and performance of compute instances within the public cloud, such as Amazon EC2 instances or Microsoft Azure VMs. These metrics can include processor, memory, disk, network, and general health and availability parameters. Such metrics help to determine whether the application within each instance is running and performing adequately.

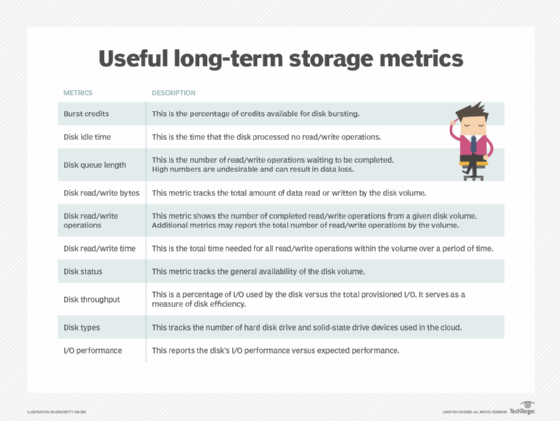

Storage instances

The disk metrics described above for compute instances apply to ephemeral storage tied to the instance itself. Long-term storage resources, such as Amazon S3 or Google Cloud Storage, provide a different set of metrics that detail the utilization, performance and status of storage resources, separate from compute instances.

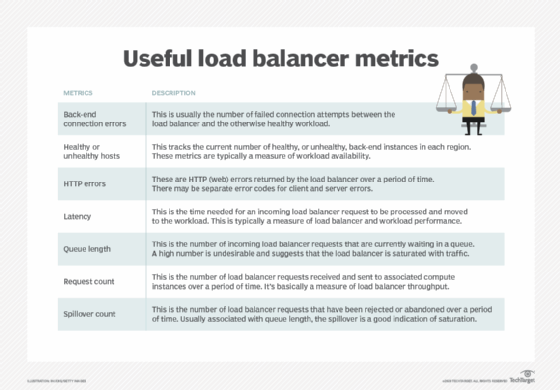

Load balancers

Load balancing services, such as Google Cloud Load Balancing and Azure Load Balancer, distribute incoming network traffic from the internet across workloads running in the public cloud, such as on Google Compute Engine instances or Azure VMs, respectively.

For example, businesses often place copies of vital workloads in more than one region to provide better availability while managing latency. Load balancer metrics show the performance of the load balancer itself along with the performance of the back-end workload it serves.

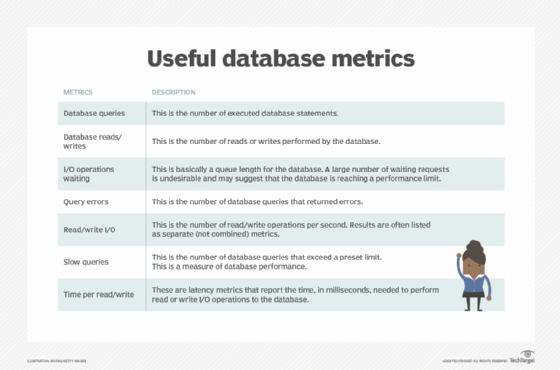

Database instances

IT teams can choose from a range of databases and other services to pool and analyze data. For example, AWS has Amazon Aurora, Relational Database Service, Redshift, DynamoDB and more. Public cloud metrics are vital for tracking the throughput, performance and utilization of database services.

Since a database is basically a workload that is already installed and running on the cloud provider's infrastructure, administrators and developers may also be able to access a suite of additional CPU, disk, I/O and network metrics for the database compute instance, similar to the compute instance metrics discussed earlier. This creates additional transparency and enables users to see what's happening on the cloud provider's side.

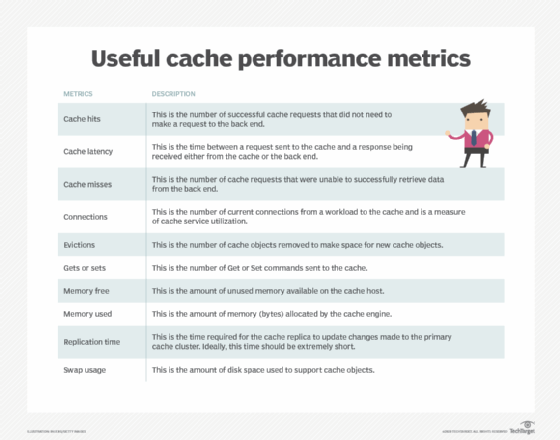

Cache instances

Cloud providers offer hosted and managed in-memory cache services. The goal is to use memory to hold frequently accessed data without needing to access slower services such as disk storage. This enables better throughput and lower latency for critical workloads.

For example, AWS offers the ElastiCache service and Google has Memorystore, both of which support Redis and memcached caching engines. Cache metrics report on the performance of the cache itself along with the performance of the workload using the cache.

As with databases, a cache is basically a workload that is implemented and supported by the cloud provider, and additional metrics such as CPU, disk, I/O and network metrics may also be available for the cache compute instance.
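One common way to summarize cache performance is the hit ratio: the share of lookups served from memory rather than from a slower back end. The sketch below assumes the cache service exposes hit and miss counters; the counter values and the function name are hypothetical.

```python
def cache_hit_ratio(hits, misses):
    """Return the fraction of lookups served from the cache (0.0 to 1.0)."""
    total = hits + misses
    if total == 0:
        return 0.0  # no lookups yet, so no meaningful ratio
    return hits / total


# Hypothetical counters collected over one monitoring period.
hits = 9_000
misses = 1_000

ratio = cache_hit_ratio(hits, misses)
print(f"Cache hit ratio: {ratio:.1%}")
```

A falling hit ratio over time can suggest the cache is undersized or that access patterns have changed, which is why it is worth tracking alongside latency.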

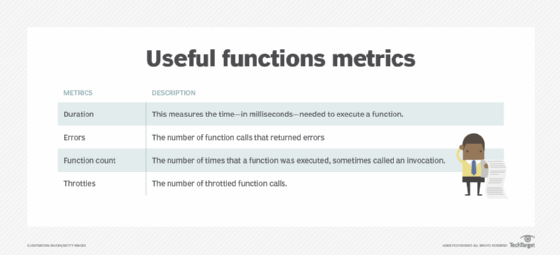

Functions

Developers use serverless computing services -- such as AWS Lambda, Azure Functions and Google Cloud Functions -- to implement small workloads, called functions, without the need to deliberately provision and pay for compute instances. They simply upload code and trigger parameters. The cloud provider automatically loads, executes and then unloads the function when trigger parameters are met.

Shift from metrics to KPIs

Gathering metrics from a cloud provider can reveal vital details about the provider's resources and services, but it generally does little to tell a business how well a given workload is actually running. A business may wish to perform application performance monitoring or user experience monitoring to make those business- or workload-specific determinations.

The shift from metrics to KPIs usually comes when businesses use metrics to calculate or derive business-centric details that are not directly available from the cloud provider. Separate tools such as Amazon QuickSight may be required to gather underlying metrics and implement calculations to create desired KPIs. There are countless KPIs that may interest a business, but common ones include:

- Application availability. Calculate availability by monitoring instance health metrics and calculating a percentage of healthy versus unhealthy responses over time.

- Error rates. Calculate error rates by monitoring workload or service responses and calculating the percentage of unsuccessful operations, relative to all operations, over a certain period.

- Latency. Calculate application latency by measuring the time between a request to the application and the application's response. The average latency of requests can then be calculated over time.

- Throughput. An application's throughput can usually be calculated by monitoring the number of successful operations or transactions completed over a period of time.
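The four calculations above can be sketched in a few lines. This is an illustration only: the `RequestRecord` type, the sample data and the 2-second observation period are all hypothetical, and a real deployment would pull these values from its monitoring tool.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    latency_ms: float  # time from request to response
    succeeded: bool    # True if the operation completed successfully


def percentage(part, total):
    """Return part as a percentage of total, or 0.0 when total is zero."""
    return 100.0 * part / total if total else 0.0


def availability_pct(health_checks):
    """Share of health checks that came back healthy."""
    return percentage(sum(health_checks), len(health_checks))


def error_rate_pct(records):
    """Share of operations that were unsuccessful."""
    failed = sum(1 for r in records if not r.succeeded)
    return percentage(failed, len(records))


def average_latency_ms(records):
    """Mean request-to-response time across all records."""
    return sum(r.latency_ms for r in records) / len(records)


def throughput_per_second(records, period_seconds):
    """Successful operations completed per second of the period."""
    succeeded = sum(1 for r in records if r.succeeded)
    return succeeded / period_seconds


# Hypothetical sample data: 100 health checks and 4 requests over 2 seconds.
health_checks = [True] * 99 + [False]
records = [
    RequestRecord(120.0, True),
    RequestRecord(80.0, True),
    RequestRecord(200.0, False),
    RequestRecord(100.0, True),
]

print(availability_pct(health_checks))
print(error_rate_pct(records))
print(average_latency_ms(records))
print(throughput_per_second(records, period_seconds=2))
```

Averages can hide outliers, so teams often track percentile latency as well; the mean is used here only because it matches the description above.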

Migration KPIs

Businesses often calculate KPIs for strategic business tasks, such as cloud migrations. Business metrics for cloud migrations are not readily or directly available but still must be calculated or inferred for business analysis. For example, a business can calculate the time needed for a migration, the cost of a migration process, downtime and so on.
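As a simple sketch of how migration KPIs like these might be tallied, the example below derives duration, total downtime and total cost from recorded values. All dates, outage lengths and cost categories are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical migration window.
migration_start = datetime(2024, 3, 1, 8, 0)
migration_end = datetime(2024, 3, 15, 18, 0)

# Hypothetical outages recorded during the cutover.
outages = [timedelta(minutes=45), timedelta(minutes=30)]

# Hypothetical cost categories in USD.
costs = {
    "data_transfer": 1_200.0,
    "consulting": 8_000.0,
    "parallel_running": 2_300.0,
}

duration = migration_end - migration_start
total_downtime = sum(outages, timedelta())  # start the sum from a zero timedelta
total_cost = sum(costs.values())

print(f"Duration: {duration.days} days, {duration.seconds // 3600} hours")
print(f"Downtime: {total_downtime}")
print(f"Cost: ${total_cost:,.2f}")
```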

Public cloud KPI tools

Cloud architects need the right tools to gather public cloud metrics and derive KPIs. Public cloud providers offer monitoring services that can track and report many common metrics.

For example, IT teams can calculate trends by using Amazon CloudWatch to gather metrics, logs and events. This information helps monitor application health and performance, optimize resource usage and more. Microsoft Azure and Google Cloud Platform also have native monitoring and reporting tools -- such as Azure Monitor and Google Cloud Operations -- for everyday cloud metrics and cloud cost management.

However, none of these tools includes a simple way to translate native metrics into business-oriented KPIs. For example, a cloud provider has no way of knowing whether a business wants to receive KPIs such as cost per transaction, churn rate or countless other parameters that help make public clouds useful to businesses. IT teams need additional native and third-party tools to calculate and derive meaningful KPIs. A small sampling of potential tools for KPIs and data analytics includes:

- Amazon QuickSight. Users can compose custom KPIs from available data types and fields.

- Cloudability. This is a cloud management platform that focuses on cost issues, bringing cloud cost management, visibility and optimization to enterprise users.

- CloudCheckr CMx. This provides asset inventory, resource utilization, cost management, security and automation for cloud management.

- Datadog. This aggregates and tracks metrics and events across the full enterprise stack, including SaaS and IaaS, automation tools and monitoring and instrumentation.

- Google Data Studio. This can help businesses collect data from myriad different sources and then visualize that data through charts, tables and more.

- Microsoft Power BI. This offers data-based analytics for business decision-making.

- Turbonomic. This application resource management tool uses AI to help users achieve consistent and long-term cloud performance while maintaining business policies and containing cloud costs.

- VMware CloudHealth. This enables users to analyze and manage cloud use, security and cost with a focus on governance and compliance.