What is a private cloud?

Private cloud is a type of cloud computing that delivers advantages similar to those of public cloud, including scalability and self-service, but through a proprietary architecture. A private cloud, also known as an internal or corporate cloud, is dedicated to the needs and goals of a single organization, whereas public clouds deliver services to multiple organizations.

How do private clouds work?

A private cloud is a single-tenant computing infrastructure and environment, meaning the organization using it -- the tenant -- doesn't share resources with other users. Private cloud resources can be hosted and managed in a variety of ways. The private cloud might be based on resources and infrastructure already present in an organization's on-premises data center. Alternatively, it might be implemented on new or separate infrastructure provided by the organization itself or by a third party. In some cases, the single-tenant environment is enabled solely by virtualization software. In every case, the private cloud and its resources are dedicated to a single user or tenant.

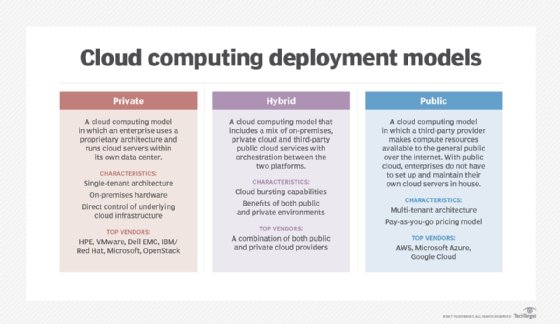

The private cloud is one of three general models for cloud deployment in an organization: public, private or hybrid. There's also multi-cloud, which is any combination of the three. All three models share common basic elements of cloud infrastructure. For example, all clouds need an operating system to function. However, the various types of software -- including virtualization and container software -- stacked on top of the operating system determine how the cloud will function and distinguish the three main models.

What is the difference between private cloud and public cloud?

A public cloud is where an independent third-party provider, such as Amazon Web Services (AWS) or Microsoft Azure, owns and maintains compute resources that customers can access over the internet. Public cloud users share these resources, a model known as a multi-tenant environment. For example, various virtual machine (VM) instances provisioned by public cloud users may share the same physical server, while storage volumes created by users may coexist on the same storage subsystem.

The private cloud fundamentally removes this sharing aspect of cloud computing, instead dedicating infrastructure and services to a single user. This is most easily and effectively accomplished by a business building its own private cloud. The goal is to provide the business with cloud-like flexibility, scalability and self-service while ensuring that only the business can use those private cloud resources.
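The contrast between shared and dedicated infrastructure can be illustrated with a toy placement routine. This is only a sketch: `place_vms`, its parameters and the tenant names are hypothetical and do not reflect how any particular provider schedules VMs. With `dedicated=False`, VMs from different tenants may land on the same host, as in a multi-tenant public cloud; with `dedicated=True`, a host only ever holds VMs from one tenant.

```python
def place_vms(vms, host_count, slots_per_host, dedicated):
    """Assign (tenant, vm) pairs to hosts using first-fit placement.

    dedicated=False: any host with a free slot is acceptable (multi-tenant).
    dedicated=True:  a host may only hold VMs from a single tenant.
    Returns a dict mapping host index to the list of placed (tenant, vm) pairs.
    """
    hosts = {i: [] for i in range(host_count)}
    for tenant, vm in vms:
        for placed in hosts.values():
            has_room = len(placed) < slots_per_host
            same_tenant = all(t == tenant for t, _ in placed)
            if has_room and (same_tenant or not dedicated):
                placed.append((tenant, vm))
                break
        else:
            # No host satisfied the capacity and tenancy constraints.
            raise RuntimeError(f"no capacity for {tenant}/{vm}")
    return hosts


# Hypothetical tenants "a" and "b" on two hosts with two VM slots each.
vms = [("a", "vm1"), ("b", "vm1"), ("a", "vm2")]
shared = place_vms(vms, host_count=2, slots_per_host=2, dedicated=False)
isolated = place_vms(vms, host_count=2, slots_per_host=2, dedicated=True)
```

In the shared run, tenants `a` and `b` end up on the same host; in the dedicated run, each host serves exactly one tenant, at the cost of leaving a slot unused.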

Private clouds are often deployed when public clouds are deemed inappropriate or inadequate for the needs of a business. For example, a public cloud might not provide the level of service availability or uptime that an organization needs. In other cases, the risk of hosting a mission-critical workload in the public cloud might exceed an organization's risk tolerance, or there might be security or regulatory compliance concerns related to the use of a multi-tenant environment. In these cases, an enterprise might opt to invest in a private cloud to realize the benefits of cloud computing while maintaining total control and ownership of its environment.

However, public clouds do have advantages. A public cloud offers an opportunity for cost savings through the use of computing as a utility, meaning customers only pay for the resources they use. Public cloud can also be a simpler model to implement because the provider handles most of the infrastructure responsibilities.

By contrast, organizations that implement a private cloud face all of the ownership and management burdens present in a traditional data center, such as power, cooling and hardware costs. Private clouds also face practical limitations in scalability and services because a single business might not have the finances or technical expertise to implement a full-featured cloud for private use.

What is the difference between private cloud and hybrid cloud?

A hybrid cloud is a model in which a private cloud connects with public cloud infrastructure, enabling an organization to orchestrate workloads -- ideally seamlessly -- across the two environments. In this model, the public cloud effectively becomes an extension of the private cloud to form a single, uniform cloud. A hybrid cloud deployment requires a high level of compatibility between the underlying software and services used by both the public and private clouds.

This model can provide a business with greater flexibility than a private or public cloud alone because it lets workloads move between private and public clouds as computing needs and costs change.

A hybrid cloud is suitable for businesses with highly dynamic workloads, as well as businesses that deal in big data processing. In each scenario, the business can split the workloads between the clouds for efficiency, dedicating host-sensitive workloads to the private cloud and more demanding, less specific distributed computing tasks to the public cloud.
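As a rough illustration of how such a split might be decided, the sketch below applies one simple rule: sensitive workloads always stay private, and other workloads move to the public cloud only when their peak demand exceeds the spare private capacity. The `Workload` fields, the `place` function and the capacity figure are all assumptions made for this example, not part of any real orchestration tool.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    sensitive: bool   # assumed flag, e.g. regulated or mission-critical data
    peak_vcpus: int   # assumed estimate of peak compute demand


def place(workload, private_free_vcpus):
    """Return 'private' or 'public' for a workload under a simple rule."""
    if workload.sensitive:
        return "private"   # sensitive workloads stay on dedicated infrastructure
    if workload.peak_vcpus > private_free_vcpus:
        return "public"    # demand exceeds spare private capacity
    return "private"


# Hypothetical workloads and a hypothetical 16 spare vCPUs in the private cloud.
workloads = [
    Workload("payroll", sensitive=True, peak_vcpus=64),
    Workload("batch-analytics", sensitive=False, peak_vcpus=64),
    Workload("intranet-wiki", sensitive=False, peak_vcpus=4),
]
placement = {w.name: place(w, private_free_vcpus=16) for w in workloads}
```

A production policy would weigh more factors, such as data transfer costs and latency, but the rule shows the basic shape of the decision.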

In return for its flexibility, the hybrid model sacrifices some of the total control of the private cloud and some of the simplicity and convenience of the public cloud.

Advantages of a private cloud

The main advantage of a private cloud is that users don't share resources. Because of its proprietary nature, a private cloud computing model is best for businesses with dynamic or unpredictable computing needs that require direct control over their environments, typically to meet security, business governance or regulatory compliance requirements.

When an organization properly architects and implements a private cloud, it can provide most of the same benefits found in public clouds, such as user self-service and scalability, as well as the ability to provision and configure VMs and change or optimize computing resources on demand. An organization can also implement chargeback or showback tools to track computing usage and ensure business units pay only for the resources or services they use.

In addition to those core benefits inherent to both cloud deployment models, private clouds also offer the following advantages:

- increased security of an isolated network;

- increased performance due to resources being solely dedicated to one organization; and

- increased capability for customization, such as specialized services or applications that suit the particular company.
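The chargeback and showback tracking mentioned above amounts to metering usage per business unit and multiplying it by a rate. The following is a minimal sketch under stated assumptions: the metric names, the rates in `RATES` and the usage records are invented for illustration, and a real tool would pull this data from the cloud platform's metering service.

```python
from collections import defaultdict

# Hypothetical unit rates; real rates depend on the organization's own costs.
RATES = {"vcpu_hours": 0.04, "gb_ram_hours": 0.005, "gb_storage_months": 0.02}


def chargeback(usage_records, rates=RATES):
    """Total the cost of metered usage per business unit."""
    totals = defaultdict(float)
    for record in usage_records:
        unit = record["business_unit"]
        for metric, rate in rates.items():
            # Metrics missing from a record count as zero usage.
            totals[unit] += record.get(metric, 0) * rate
    return {unit: round(cost, 2) for unit, cost in totals.items()}


# Hypothetical usage records for two business units.
usage = [
    {"business_unit": "finance", "vcpu_hours": 1000, "gb_ram_hours": 4000},
    {"business_unit": "research", "vcpu_hours": 500, "gb_storage_months": 2000},
    {"business_unit": "finance", "gb_storage_months": 100},
]
bills = chargeback(usage)
```

For showback, the same totals would simply be reported to each business unit rather than billed.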

Disadvantages of a private cloud

Private clouds also have some disadvantages. First, private cloud technologies -- such as increased automation and user self-service -- can bring considerable complexity to enterprise IT. These technologies typically require an IT team to rearchitect some of its data center infrastructure as well as adopt additional software layers and management tools. As a result, an organization might have to adjust or even increase its IT staff to successfully implement and maintain a private cloud. Private clouds can also be expensive; often, when a business owns its private cloud, it bears all the acquisition, deployment, support and maintenance costs involved.

Hosted private clouds, while not owned outright by the user, can also be costly. In a hosted deployment, the service provider takes care of basic network maintenance and configuration, which means the user pays a recurring subscription fee for that service. Over the long run, these fees can exceed the upfront cost of complete ownership, and the arrangement sacrifices some of the control over maintenance that ownership provides. Although users still operate in a single-tenant environment, providers are likely serving multiple clients and promising each a tailored, custom environment. If an incident occurs on the provider's end -- an improperly maintained or overburdened server, for example -- users may find themselves facing the same problems the public cloud presents: unreliability and lack of control.
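The long-run cost comparison between ownership and a hosted subscription comes down to a break-even calculation. The sketch below assumes a simplified model in which ownership costs an upfront sum plus a fixed monthly operating cost, and hosting costs a fixed monthly fee. The function name and all figures are hypothetical, and amounts are whole currency units.

```python
def break_even_month(upfront, monthly_opex, monthly_fee):
    """Return the first whole month in which the cumulative hosted cost
    exceeds the cumulative cost of ownership, or None if it never does.

    Owned cost after m months:  upfront + monthly_opex * m
    Hosted cost after m months: monthly_fee * m
    All arguments are integers in the same currency unit.
    """
    if monthly_fee <= monthly_opex:
        return None  # hosting never costs more than owning in this model
    # hosted > owned  <=>  m > upfront / (monthly_fee - monthly_opex)
    return upfront // (monthly_fee - monthly_opex) + 1


# Hypothetical figures: 500,000 upfront and 10,000 per month to own,
# versus 25,000 per month for a hosted private cloud.
month = break_even_month(upfront=500_000, monthly_opex=10_000, monthly_fee=25_000)
```

With these made-up numbers, hosting becomes the more expensive option in month 34. Real comparisons would also account for hardware refresh cycles, staffing and price changes over time.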

Types of private clouds

Private clouds can differ by how they're hosted and managed, providing different functions depending on the needs of the enterprise:

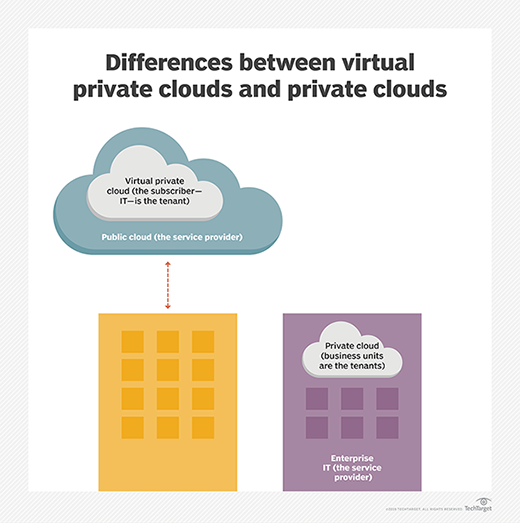

- Virtual. A virtual private cloud is a walled-off environment within a public cloud that enables an organization to run its workloads in logical isolation from every other user of the public cloud. Even though the underlying servers are shared with other organizations, the logical isolation keeps a user's computing resources private. Organizations can use a virtual private cloud to enable hybrid cloud deployment.

- Hosted. In a hosted private cloud environment, the servers aren't shared with other organizations. The service provider configures the network, maintains the hardware and updates the software, but the server is occupied by a single organization.

- Managed. This environment is simply a hosted environment in which the provider manages every aspect of the cloud for the organization, including deploying additional services such as identity management and storage. This option is appropriate for organizations that don't have staff equipped to manage private cloud environments alone.

The above list categorizes different types of private clouds by the way they're hosted and to what extent they're managed by the provider. Infrastructure is also a way to categorize different types of private clouds, such as the following:

- Software-only. The vendor provides only the software necessary for running the private cloud environment, which runs on an organization's preexisting hardware. A software-only option, such as OpenStack, is often used in highly virtualized environments.

- Software and hardware. Some vendors sell private clouds as an all-in-one bundle of hardware and software. It's generally a simple platform that exists on the user's premises and may or may not be managed by the provider. Examples include HPE GreenLake and Azure Stack.

Major private cloud vendors

A private cloud is commonly deployed on premises in much the same way a business would build and operate its own traditional data center. However, an increasing number of vendors offer private cloud services that can bolster or even replace on-premises systems.

Some of the largest players in the private cloud market include the following:

- AWS. Amazon Virtual Private Cloud lets users launch AWS resources in a logically isolated virtual network within the AWS public cloud, creating a private section of public AWS infrastructure.

- Cisco. The vendor provides its Quickstart Private Cloud to create a self-service private cloud environment along with varied platforms, including Cisco Firepower, Cisco ASAP Data Center, Cisco Secure Workload and other tools for optimization, container management and application performance management.

- Dell EMC. In addition to cloud management and cloud security software, Dell EMC offers virtual private cloud services through its Project Apex cloud console.

- Google. The tech giant offers a virtual private cloud product that enables highly customizable network environments for hosting public or private workloads, along with access to Google services such as storage, analytics and machine learning.

- HPE. The vendor's GreenLake offering provides a set of cloud services compatible with VMware, OpenStack, SAP and other components.

- IBM. IBM offers private cloud hardware, along with its Cloud Managed Services, cloud security tools and cloud management and orchestration tools. IBM now owns Red Hat with its private cloud capabilities.

- Microsoft. Azure Stack helps build and run applications across data centers, edge locations, remote offices and even the public cloud.

- Oracle. Private Cloud Appliance X8 by Oracle provides compute and storage capabilities optimized for private cloud deployment.

- VMware. Private, hybrid and multi-cloud products include capacity management, security, container support, application modernization and hyper-converged infrastructure tools. VMware also offers its vRealize Suite cloud management platform, Cloud Foundation and Software-Defined Data Center platform for private clouds.

Managed private cloud pricing

As mentioned, operating a private cloud on premises is generally more expensive upfront than using a public cloud for computing as a utility. This is due to back-end maintenance expenses that come with owning a private infrastructure and the capital expense of implementing one. However, a managed private cloud can mitigate those costs and, in some cases, even be cheaper than a standard public cloud implementation.

Vendors offer a few different pricing models for managed private clouds. The pricing model and the price itself can vary depending on the private cloud hardware and software offered and the level of management provided by the vendor. Often, the pricing is based on packages of hardware, software and services that can be used in private cloud deployments. For example, VMware prices its virtualization platform vSphere using a yearly subscription and support model: a flat license fee plus one yearly price for a basic subscription or a slightly higher price for a production-level subscription.

Rackspace, in partnership with HPE, offers a pay-as-you-go model for its private cloud, charging end users on a service-to-service basis. The popularity of this pricing model is growing due to rapid expansion in the cloud-based infrastructure market, fostering the need for a more flexible and efficient pricing model.

Understanding pricing models for managed private cloud deployments can get complicated. Many vendor websites don't offer a straightforward private cloud package. Instead, they sell a spectrum of hardware, software and services that a company can use to deploy a private cloud. Often, the pricing for these products isn't stated clearly on vendor websites, and buyers are prompted to speak with a salesperson once they reach the purchase-oriented part of the site. This is likely because private clouds -- and managed clouds, especially -- need to be tailored to an organization's needs. Buyers should understand which business processes require the cloud and why they need flexible and scalable cloud infrastructure so that they can work with the vendor to choose the proper cloud deployment and the products that form it most efficiently.
